Building Your First Agentic AI in .NET Core

From simple prompts to autonomous, tool-using agents with C# and .NET

Agentic AI is about going one step further. Instead of just answering questions, an agent can:

Take a goal (e.g., “summarize all reports and write a brief”)

Decide what to do next

Use tools (APIs, files, databases)

Observe results and iterate

Stop only when the task is done

In this post, you will build a simple Agentic AI in .NET Core: a console app where an LLM-powered agent can call tools to work with files.

We will focus on the architecture and patterns; you can plug in your preferred LLM provider (OpenAI, Azure OpenAI, etc.) later.

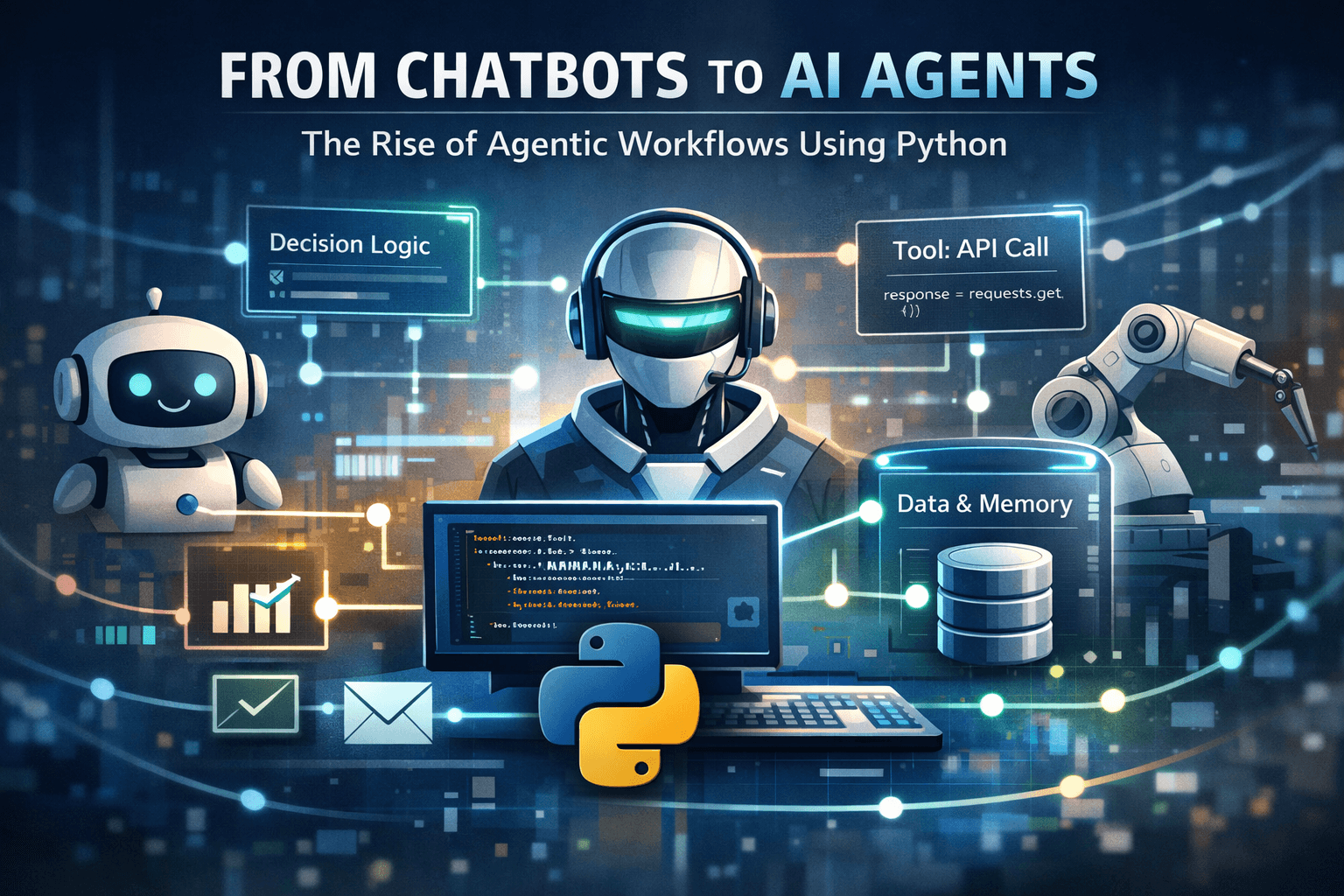

1. From chatbot to agent: what actually changes?

A typical chatbot integration:

User: "Explain dependency injection in .NET"

App: [send prompt to LLM]

LLM: "Dependency injection is …"

App: show answer, stop.

An agentic integration looks more like this:

Goal: "Summarize all .txt files in a folder and write a report."

Loop:

1. Agent decides: "I should list the files."

2. Agent calls tool: list_files(path=".")

3. Agent sees results and decides next step:

"Now I should read input.txt."

4. Agent calls tool: read_file(path="input.txt")

5. Agent decides: "Now I can write the report."

6. Agent calls tool: write_file(path="report.txt", content="...")

7. Agent says: "Done, report is ready at report.txt."

Key differences:

The agent has a goal, not just a single prompt.

There is a loop: think → act (tool) → observe → repeat.

The LLM decides what to do next, but your code defines what it is allowed to do via tools and guardrails.

Let’s build a minimal version of this in .NET Core.

2. Project setup

Create a new console application:

dotnet new console -n AgenticAiDemo

cd AgenticAiDemo

We will assume everything lives under one namespace:

namespace AgenticAiDemo;

You can later split into folders/projects as needed.

3. Modeling the agent’s context and decisions

An agent needs to track:

The goal

A history of what happened so far

Some simple memory (key-value pairs)

And for each step, we want the LLM to return a decision: which tool to call next (if any), with what arguments, or whether to finish.

Create AgentModels.cs:

using System.Text.Json.Serialization;

namespace AgenticAiDemo;

public class AgentContext

{

public string Goal { get; set; } = string.Empty;

public List<string> History { get; } = new();

public Dictionary<string, string> Memory { get; } = new();

}

public class AgentToolCall

{

[JsonPropertyName("tool")]

public string ToolName { get; set; } = string.Empty;

[JsonPropertyName("arguments")]

public Dictionary<string, string>? Arguments { get; set; }

[JsonPropertyName("isDone")]

public bool IsDone { get; set; }

[JsonPropertyName("finalAnswer")]

public string? FinalAnswer { get; set; }

[JsonPropertyName("reasoning")]

public string? Reasoning { get; set; }

}

We will instruct the LLM to return JSON matching AgentToolCall, for example:

{

"tool": "read_file",

"arguments": { "path": "input.txt" },

"isDone": false,

"finalAnswer": null,

"reasoning": "Now I should read the main file."

}

This keeps the agent loop clean and machine-readable.
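To make the JSON contract concrete, here is a minimal standalone sketch of parsing one decision with System.Text.Json. DecisionSketch is a hypothetical stand-in that mirrors AgentToolCall so the snippet compiles on its own; it is not part of the project. The second half illustrates one consequence of typing Arguments as Dictionary<string, string>: with default serializer options, a numeric argument value is rejected rather than converted.

```csharp
using System;
using System.Collections.Generic;
using System.Text.Json;
using System.Text.Json.Serialization;

// The same example decision shown above, as a raw string literal (C# 11+).
var json = """
{
  "tool": "read_file",
  "arguments": { "path": "input.txt" },
  "isDone": false,
  "finalAnswer": null,
  "reasoning": "Now I should read the main file."
}
""";

var decision = JsonSerializer.Deserialize<DecisionSketch>(json);

Console.WriteLine(decision?.ToolName);            // read_file
Console.WriteLine(decision?.Arguments?["path"]);  // input.txt
Console.WriteLine(decision?.IsDone);              // False

// A non-string argument value does not fit Dictionary<string, string>.
var rejected = false;
try
{
    JsonSerializer.Deserialize<DecisionSketch>(
        """{"tool":"read_file","arguments":{"maxLines":10}}""");
}
catch (JsonException)
{
    rejected = true;
    Console.WriteLine("Rejected: argument values must be JSON strings.");
}

// Hypothetical stand-in for AgentToolCall (same JSON property names).
public class DecisionSketch
{
    [JsonPropertyName("tool")]
    public string ToolName { get; set; } = string.Empty;

    [JsonPropertyName("arguments")]
    public Dictionary<string, string>? Arguments { get; set; }

    [JsonPropertyName("isDone")]
    public bool IsDone { get; set; }

    [JsonPropertyName("finalAnswer")]
    public string? FinalAnswer { get; set; }

    [JsonPropertyName("reasoning")]
    public string? Reasoning { get; set; }
}
```

If you expect the model to send numbers or booleans as arguments, one option is to say so in the prompt ("all argument values must be strings"); another is to change the dictionary value type.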

4. Tools: how the agent acts on the world

In Agentic AI, a tool is just an action your system is allowed to perform on behalf of the agent: read a file, call an API, write a report, etc.

We will define a simple abstraction plus a registry.

Create Tools.cs:

using System.Text;

using System.IO;

namespace AgenticAiDemo;

public interface ITool

{

string Name { get; }

string Description { get; }

Task<string> ExecuteAsync(Dictionary<string, string>? args);

}

public class ToolRegistry

{

private readonly Dictionary<string, ITool> _tools =

new(StringComparer.OrdinalIgnoreCase);

public void Register(ITool tool)

{

_tools[tool.Name] = tool;

}

public bool TryGet(string name, out ITool? tool)

{

return _tools.TryGetValue(name, out tool);

}

public string DescribeAllTools()

{

return string.Join("\n", _tools.Values.Select(t =>

$"- {t.Name}: {t.Description}"));

}

}

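Now let’s implement three concrete tools for our file-summarizing scenario. Put each one in its own file: every listing below starts with its own file-scoped namespace declaration, and a C# source file may contain only one.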

4.1. List files tool

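// ListFilesTool.cs

using System.Text; // StringBuilder; System.Text is not among the console template's implicit usings

namespace AgenticAiDemo;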

public class ListFilesTool : ITool

{

public string Name => "list_files";

public string Description => "List .txt files in a folder. Args: path";

public Task<string> ExecuteAsync(Dictionary<string, string>? args)

{

var path = args != null && args.TryGetValue("path", out var p) ? p : ".";

if (!Directory.Exists(path))

{

return Task.FromResult($"Folder '{path}' does not exist.");

}

var files = Directory.GetFiles(path, "*.txt");

if (files.Length == 0)

return Task.FromResult("No .txt files found.");

var sb = new StringBuilder();

sb.AppendLine($"Found {files.Length} .txt files:");

foreach (var f in files)

{

sb.AppendLine(Path.GetFileName(f));

}

return Task.FromResult(sb.ToString());

}

}

4.2. Read file tool

namespace AgenticAiDemo;

public class ReadFileTool : ITool

{

public string Name => "read_file";

public string Description => "Read the content of a .txt file. Args: path";

public async Task<string> ExecuteAsync(Dictionary<string, string>? args)

{

if (args == null || !args.TryGetValue("path", out var path))

return "Missing 'path' argument.";

if (!File.Exists(path))

return $"File '{path}' not found.";

var text = await File.ReadAllTextAsync(path);

return text;

}

}

4.3. Write file tool

namespace AgenticAiDemo;

public class WriteFileTool : ITool

{

public string Name => "write_file";

public string Description => "Write content to a .txt file. Args: path, content";

public async Task<string> ExecuteAsync(Dictionary<string, string>? args)

{

if (args == null

|| !args.TryGetValue("path", out var path)

|| !args.TryGetValue("content", out var content))

{

return "Missing 'path' or 'content' argument.";

}

await File.WriteAllTextAsync(path, content);

return $"Wrote report to '{path}'.";

}

}

With these three tools, the agent can inspect a folder, read content, and write a summary report.
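The registry is what turns a tool name chosen by the model into an actual call. The following standalone sketch shows that dispatch in isolation. ISketchTool, SketchRegistry, and EchoTool are hypothetical names that mirror ITool and ToolRegistry so the snippet runs on its own; EchoTool exists only for illustration and touches no files.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;

var registry = new SketchRegistry();
registry.Register(new EchoTool());

// This text is what ends up in the {TOOLS} part of the prompt.
Console.WriteLine(registry.DescribeAllTools());

// Lookup is case-insensitive because of StringComparer.OrdinalIgnoreCase.
registry.TryGet("ECHO", out var tool);
var result = tool is null
    ? "(not found)"
    : await tool.ExecuteAsync(new Dictionary<string, string> { ["text"] = "hi" });
Console.WriteLine(result); // Echo: hi

// A tool name the model invents is not found, so nothing can run.
var unknownFound = registry.TryGet("delete_everything", out _);
Console.WriteLine(unknownFound); // False

// Hypothetical mirror of ITool.
public interface ISketchTool
{
    string Name { get; }
    string Description { get; }
    Task<string> ExecuteAsync(Dictionary<string, string>? args);
}

// Hypothetical mirror of ToolRegistry.
public class SketchRegistry
{
    private readonly Dictionary<string, ISketchTool> _tools =
        new(StringComparer.OrdinalIgnoreCase);

    public void Register(ISketchTool tool) => _tools[tool.Name] = tool;

    public bool TryGet(string name, out ISketchTool? tool) =>
        _tools.TryGetValue(name, out tool);

    public string DescribeAllTools() =>
        string.Join("\n", _tools.Values.Select(t => $"- {t.Name}: {t.Description}"));
}

// Illustration-only tool: returns its 'text' argument.
public class EchoTool : ISketchTool
{
    public string Name => "echo";
    public string Description => "Echo the 'text' argument back. Args: text";

    public Task<string> ExecuteAsync(Dictionary<string, string>? args)
    {
        var text = args != null && args.TryGetValue("text", out var t) ? t : "";
        return Task.FromResult($"Echo: {text}");
    }
}
```

The point of the design is that the model only ever supplies a string; whether anything runs is decided by what your code registered.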

5. LLM client abstraction

We do not want our core agent logic to depend on any specific LLM provider. Instead, we define a minimal interface.

Create LLMClient.cs:

namespace AgenticAiDemo;

public interface ILLMClient

{

Task<string> GetAgentDecisionJsonAsync(string prompt);

}

prompt is the text we send to the model (instructions + history + tools).

The model must respond with a JSON string we can deserialize into AgentToolCall.

In this post, we will start with a fake implementation for demonstration and testing. Later, you can replace it with a real HTTP call to your chosen LLM.

6. Implementing the agent loop

Now we tie everything together: the agent takes a goal, builds prompts, calls the LLM for decisions, calls tools, tracks history, and stops when done or when it hits a safety limit.

Create Agent.cs:

using System.Text.Json;

namespace AgenticAiDemo;

public class Agent

{

private readonly ILLMClient _llm;

private readonly ToolRegistry _tools;

private readonly JsonSerializerOptions _jsonOptions;

public Agent(ILLMClient llm, ToolRegistry tools)

{

_llm = llm;

_tools = tools;

_jsonOptions = new JsonSerializerOptions

{

PropertyNameCaseInsensitive = true

};

}

public async Task<string> RunAsync(string goal, int maxSteps = 10)

{

var context = new AgentContext { Goal = goal };

for (int step = 1; step <= maxSteps; step++)

{

Console.WriteLine($"\n[Agent] Step {step}");

var prompt = BuildPrompt(context);

var decisionJson = await _llm.GetAgentDecisionJsonAsync(prompt);

AgentToolCall? decision;

try

{

decision = JsonSerializer.Deserialize<AgentToolCall>(decisionJson, _jsonOptions);

}

catch (Exception ex)

{

context.History.Add($"Failed to parse LLM JSON: {ex.Message}");

continue;

}

if (decision == null)

{

context.History.Add("LLM returned null decision.");

continue;

}

if (!string.IsNullOrWhiteSpace(decision.Reasoning))

{

context.History.Add($"Reasoning: {decision.Reasoning}");

}

if (decision.IsDone)

{

var final = decision.FinalAnswer ?? "(no final answer)";

context.History.Add($"Final: {final}");

return final;

}

if (string.IsNullOrWhiteSpace(decision.ToolName))

{

context.History.Add("No tool selected; stopping.");

break;

}

if (!_tools.TryGet(decision.ToolName, out var tool) || tool == null)

{

context.History.Add($"Unknown tool '{decision.ToolName}'.");

break;

}

var result = await tool.ExecuteAsync(decision.Arguments);

context.History.Add($"Tool {tool.Name} result:\n{result}");

}

return "Agent stopped without reaching a final answer.";

}

private string BuildPrompt(AgentContext context)

{

var historyText = string.Join("\n", context.History);

var toolsDescription = _tools.DescribeAllTools();

var systemInstructions = """

You are an AI agent. Your goal:

{GOAL}

You have the following tools available:

{TOOLS}

At each step, you must respond ONLY with a JSON object with this shape:

{

"tool": "tool_name_or_empty_if_done",

"arguments": { "key": "value" },

"isDone": true_or_false,

"finalAnswer": "string or null",

"reasoning": "short explanation"

}

""";

var prompt = systemInstructions

.Replace("{GOAL}", context.Goal)

.Replace("{TOOLS}", toolsDescription)

+ "\n\nHistory:\n" + historyText;

return prompt;

}

}

A few important design points:

maxSteps is a safety guardrail to avoid infinite loops.

History records reasoning and tool results, which is invaluable for debugging.

BuildPrompt embeds:

The goal

Tool descriptions

Clear JSON response instructions

The interaction history so the LLM can plan the next step.

Note that BuildPrompt uses a raw string literal (the triple-quoted block), which requires C# 11, the default language version from .NET 7 onward. On an older SDK, use a verbatim string (@"...") instead and double the inner quotes.

7. A fake LLM client to demo the architecture

Before calling a real LLM, we can plug in a fake client that returns hard-coded decisions. This lets you test the agent loop and tools without any external dependency.

In Program.cs, define FakeLLMClient and the entry point:

using System.Text.Json;

using AgenticAiDemo;

public class FakeLLMClient : ILLMClient

{

private int _callCount = 0;

public Task<string> GetAgentDecisionJsonAsync(string prompt)

{

_callCount++;

// For demonstration:

// 1st call: list files

// 2nd call: read a specific file

// 3rd call: write the report

// 4th call (and any later one): finish

//

// IsDone stays false on the write step because Agent.RunAsync checks IsDone

// before executing a tool; a decision that sets both would skip the write.

return Task.FromResult(_callCount switch

{

1 => JsonSerializer.Serialize(new AgentToolCall

{

ToolName = "list_files",

Arguments = new Dictionary<string, string> { ["path"] = "." },

IsDone = false,

Reasoning = "First I will list the files in the folder."

}),

2 => JsonSerializer.Serialize(new AgentToolCall

{

ToolName = "read_file",

Arguments = new Dictionary<string, string> { ["path"] = "input.txt" },

IsDone = false,

Reasoning = "Now I will read the main input file."

}),

3 => JsonSerializer.Serialize(new AgentToolCall

{

ToolName = "write_file",

Arguments = new Dictionary<string, string>

{

["path"] = "report.txt",

["content"] = "This is a fake summarized report created by the demo agent."

},

IsDone = false,

Reasoning = "Now I will write the report."

}),

_ => JsonSerializer.Serialize(new AgentToolCall

{

ToolName = "",

IsDone = true,

FinalAnswer = "I created 'report.txt' with the summary.",

Reasoning = "Task is done."

})

});

}

}

public class Program

{

public static async Task Main(string[] args)

{

Console.WriteLine("=== Agentic AI Demo (.NET Core) ===");

var tools = new ToolRegistry();

tools.Register(new ListFilesTool());

tools.Register(new ReadFileTool());

tools.Register(new WriteFileTool());

ILLMClient llmClient = new FakeLLMClient();

var agent = new Agent(llmClient, tools);

var goal = "Summarize the .txt files in the current folder and write a report.";

var result = await agent.RunAsync(goal, maxSteps: 5);

Console.WriteLine("\n=== Final Result ===");

Console.WriteLine(result);

}

}

Create a simple input.txt in the project folder, run the app, and observe:

The agent “steps” printed to the console

The tools being executed

A report.txt generated by the write_file tool

Even though the LLM is fake, you now have a working agent architecture.

8. Swapping in a real LLM

Once the basic architecture is clear, you can replace FakeLLMClient with an implementation that calls a real LLM:

Build the prompt using BuildPrompt.

Call your LLM HTTP API with:

The prompt as a system/user message

A reasonable temperature (e.g., 0.2–0.5 for stability)

Instruct the model clearly:

- “Respond with JSON only, no extra text.”

Parse the response into AgentToolCall.

For example, an HttpClient-based implementation could look like:

using System.Net.Http.Json;

namespace AgenticAiDemo;

public class RealLLMClient : ILLMClient

{

private readonly HttpClient _http;

public RealLLMClient(HttpClient http)

{

_http = http;

}

public async Task<string> GetAgentDecisionJsonAsync(string prompt)

{

// TODO: implement call to your chosen LLM provider.

// 1. Serialize request body with the prompt.

// 2. Send POST.

// 3. Extract the model's text content.

// 4. Ensure it is valid JSON matching AgentToolCall.

throw new NotImplementedException();

}

}

You can keep the rest of the agent code unchanged.
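Step 4 in the TODO list above ("ensure it is valid JSON") deserves a little care, because real models sometimes wrap the JSON in extra prose even when told not to. One possible approach, sketched here under that assumption, is to cut out the outermost {...} span and confirm it parses before handing it to the agent. DecisionJson and Extract are hypothetical helper names, not part of any provider SDK.

```csharp
using System;
using System.Text.Json;

var reply = "Sure, here is my decision: {\"tool\":\"list_files\",\"isDone\":false} Hope that helps!";
var extracted = DecisionJson.Extract(reply);
Console.WriteLine(extracted); // {"tool":"list_files","isDone":false}

public static class DecisionJson
{
    // Returns the outermost {...} span of the reply if it parses as JSON.
    // Throws JsonException otherwise, which the agent loop's catch block
    // records in History before moving on to the next step.
    public static string Extract(string reply)
    {
        int start = reply.IndexOf('{');
        int end = reply.LastIndexOf('}');
        if (start < 0 || end <= start)
            throw new JsonException("No JSON object found in model reply.");

        string candidate = reply.Substring(start, end - start + 1);

        // JsonDocument.Parse throws a JsonException on malformed JSON.
        using var doc = JsonDocument.Parse(candidate);
        return candidate;
    }
}
```

This is a heuristic: it fails if the surrounding prose itself contains braces. If your provider offers a JSON or structured-output mode, that is usually the more reliable route.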

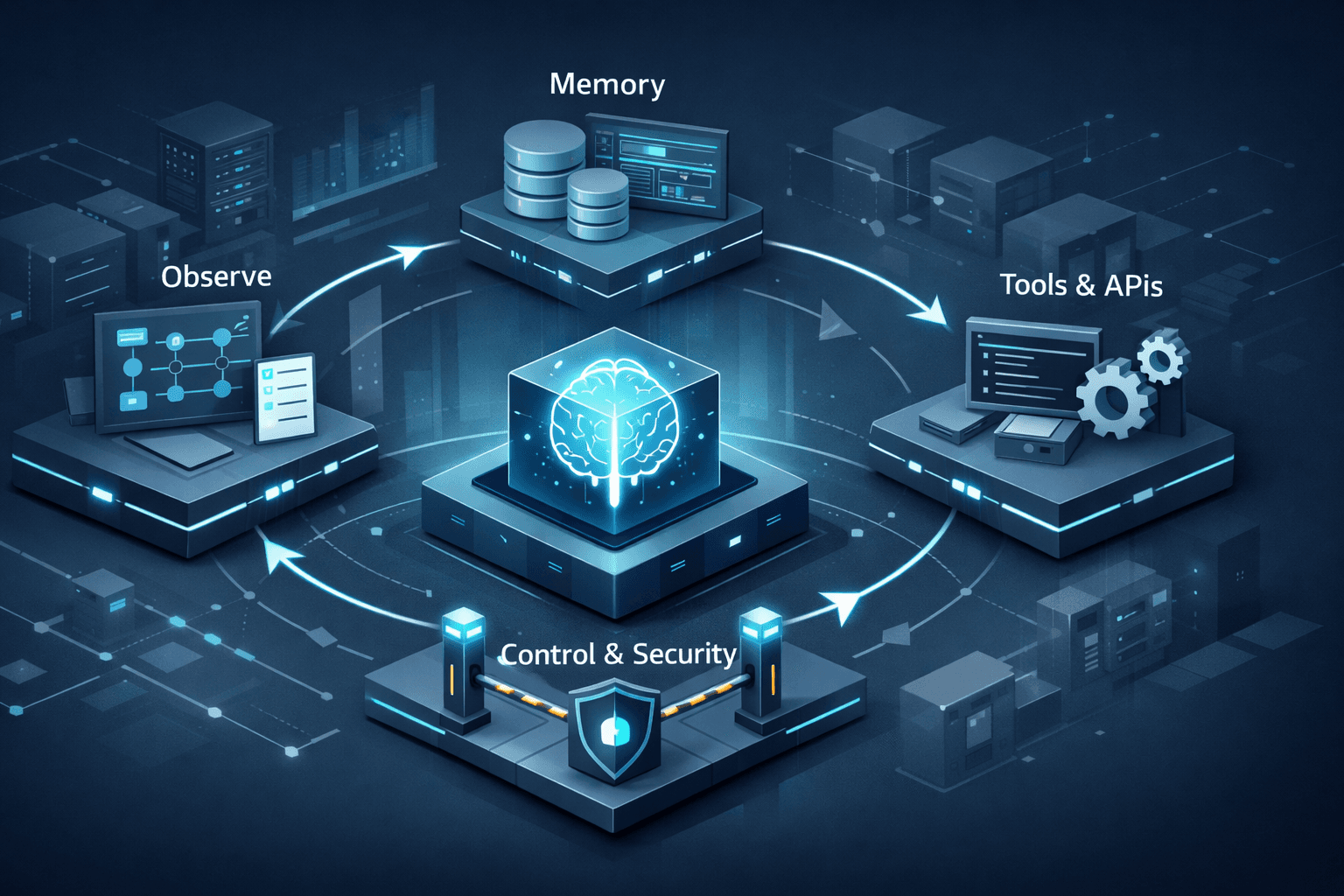

9. Safety, guardrails, and next steps

Even in this small demo, we used a few basic safety patterns:

maxSteps to avoid infinite loops

A tool registry to strictly control what the agent can do

History logging to understand why the agent did something

In a more serious project, you would add:

Confirmation steps before destructive actions (deleting files, sending emails)

Sandboxed environments (test folders, test API keys)

Validation of the tool arguments returned by the model

Monitoring and evaluation of how often the agent succeeds or fails
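As one example of argument validation combined with sandboxing, the file tools in this post accept any path the model supplies. A minimal sketch of confining them to a single folder could look like the following. SafePaths, TryResolve, and the "agent-sandbox" folder name are hypothetical; the check is purely lexical and does not account for symbolic links.

```csharp
using System;
using System.IO;

var root = Path.Combine(Path.GetTempPath(), "agent-sandbox");

bool insideOk = SafePaths.TryResolve(root, "report.txt", out var resolved);
Console.WriteLine(insideOk);  // True

bool escapeOk = SafePaths.TryResolve(root, "../secrets.txt", out _);
Console.WriteLine(escapeOk);  // False

public static class SafePaths
{
    // Resolves 'requested' relative to 'root' and reports whether the
    // resulting absolute path still lies inside 'root'.
    public static bool TryResolve(string root, string requested, out string fullPath)
    {
        string rootFull = Path.GetFullPath(root);

        // A trailing separator prevents "/sandbox-evil" from passing
        // a prefix check against "/sandbox".
        if (!rootFull.EndsWith(Path.DirectorySeparatorChar))
            rootFull += Path.DirectorySeparatorChar;

        // GetFullPath collapses ".." segments; if 'requested' is absolute,
        // Path.Combine returns it unchanged and the prefix check fails.
        fullPath = Path.GetFullPath(Path.Combine(rootFull, requested));
        return fullPath.StartsWith(rootFull, StringComparison.Ordinal);
    }
}
```

A tool such as ReadFileTool or WriteFileTool could call a helper like this first and return an error string to the agent when the check fails, so the refusal shows up in History like any other tool result.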

Next experiments to try:

Add a tool to call an external API and summarize online data

Store past tasks in a database as a simple form of long-term memory

Split responsibilities into planner and executor agents (multi-agent)

Expose the agent as a web API instead of a console app

10. Conclusion

You have built a minimal, but fully structured, Agentic AI in .NET Core:

A clear data model for the agent’s context and decisions

A tool abstraction that lets the agent act on the world safely

An agent loop that uses an LLM to decide what to do next

A demo setup that works with a fake LLM and can later be wired to a real one

From here, you can evolve this into a real project: automate internal workflows, build intelligent back-office processes, or simply explore agentic patterns as a student project.