Designing Production-Grade Agentic AI Systems

Architecture, Guardrails, and Engineering Trade-offs

Introduction

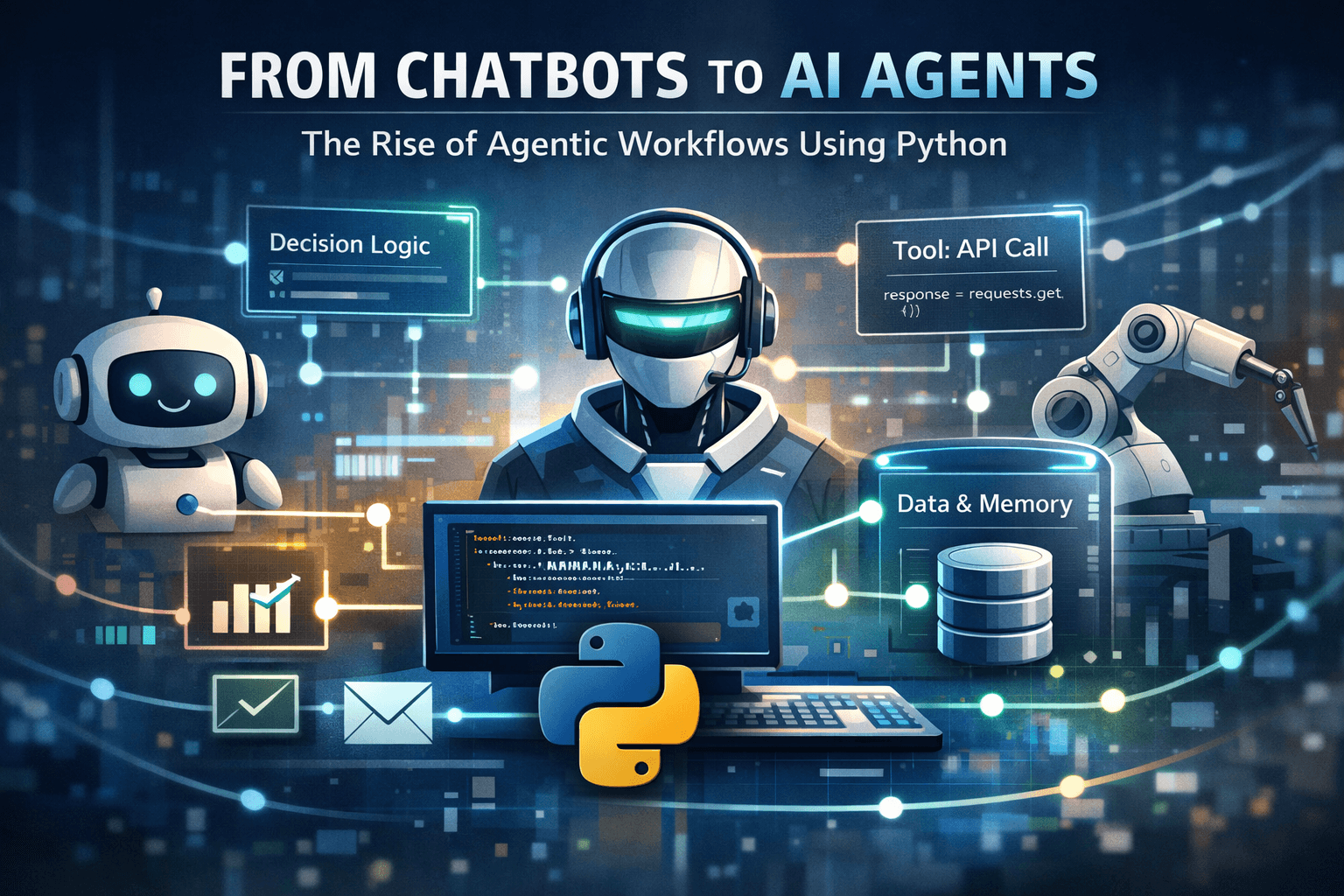

Agentic AI systems represent a shift from prompt–response models toward autonomous, goal-driven systems capable of planning, acting, and adapting over time. While demos often highlight impressive autonomy, moving Agentic AI into production introduces a very different set of engineering challenges: reliability, safety, observability, cost control, and predictable behavior.

This article focuses on how to design Agentic AI systems that are production-ready, not just impressive prototypes. We will cover core architectural patterns, common failure modes, and practical guardrails required to deploy agents responsibly in real-world environments.

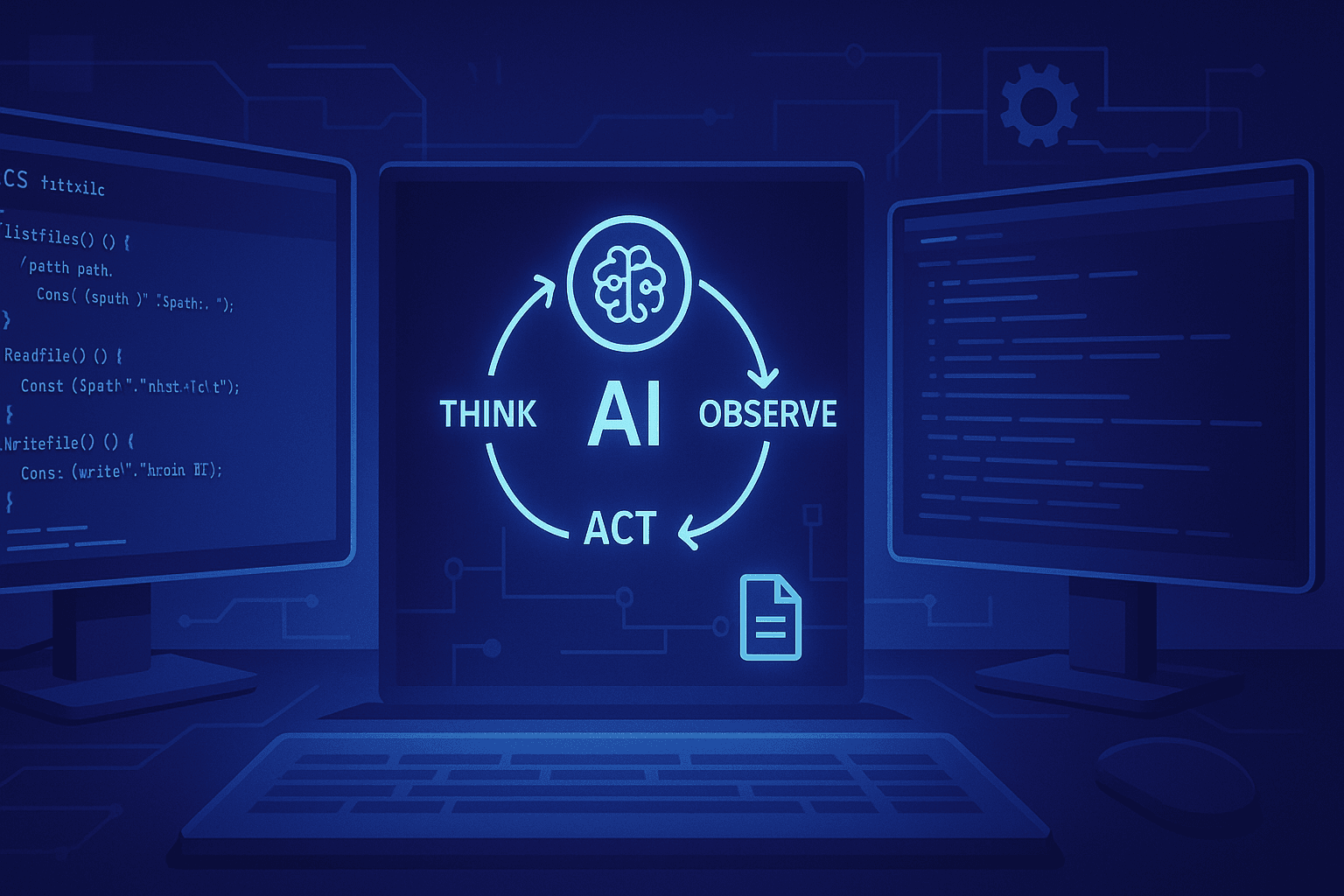

What Makes an AI System “Agentic”?

At a technical level, an Agentic AI system is defined by a closed decision loop rather than a single inference call.

A minimal agent loop looks like:

Observe – Gather state from the environment, user input, or tools

Plan – Decide what to do next to move toward a goal

Act – Execute actions via tools or APIs

Reflect – Evaluate outcomes and update internal state
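The loop above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `AgentState`, `run_agent`, and the `plan` and `act` callables are hypothetical names, and the planner is a plain function standing in for a model call.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class AgentState:
    goal: str
    history: List[Tuple[str, str]] = field(default_factory=list)  # (action, result)
    done: bool = False


def run_agent(
    goal: str,
    plan: Callable[[AgentState], Optional[str]],  # next action, or None when finished
    act: Callable[[str], str],                    # runs an action, returns its result
    max_steps: int = 10,
) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):          # hard bound so the loop always terminates
        action = plan(state)            # Observe + Plan: read state, pick next action
        if action is None:              # the planner judges the goal reached
            state.done = True
            break
        result = act(action)            # Act: execute through a tool or API
        state.history.append((action, result))  # Reflect: fold the outcome into state
    return state


# Toy usage: a stand-in planner that takes three steps and then stops.
def toy_plan(state: AgentState) -> Optional[str]:
    n = len(state.history)
    return f"step-{n + 1}" if n < 3 else None


final = run_agent("count to three", toy_plan, act=lambda a: f"did {a}")
print(final.done, len(final.history))  # True 3
```

The single inference call of a traditional pipeline corresponds to one pass through the loop body; everything else in this article is about bounding and inspecting the loop around it.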

What differentiates agentic systems from traditional AI pipelines is:

Persistence of state

Multi-step reasoning

Tool invocation

Autonomy over execution order

These properties are precisely what make agentic systems powerful—and risky.

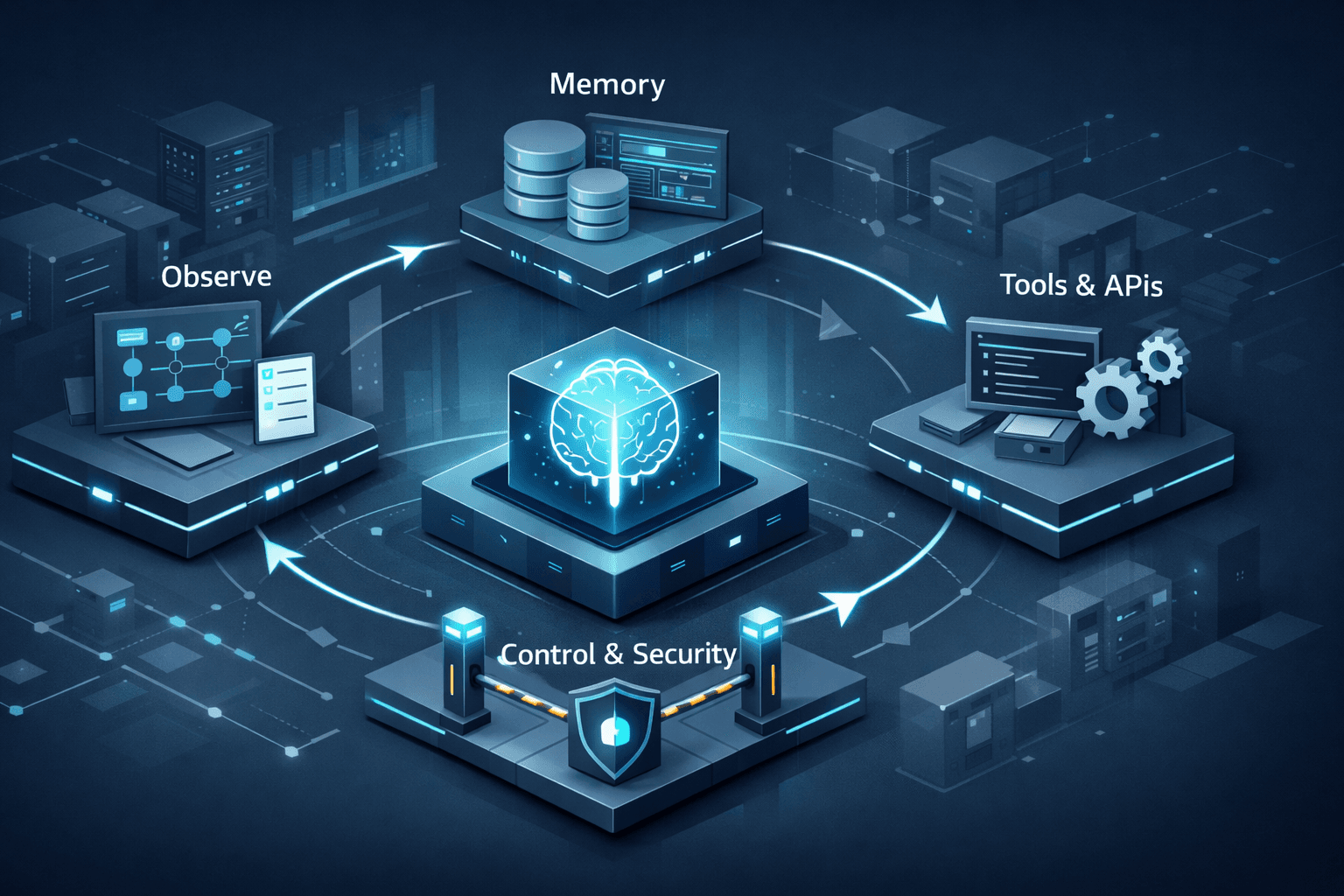

Core Architecture of a Production Agent

1. Agent Core (Decision Engine)

The agent core is responsible for:

Interpreting goals

Reasoning over context

Selecting the next action

Production considerations:

Avoid unbounded reasoning loops

Enforce step limits and timeouts

Prefer structured planning outputs (JSON plans, action graphs)
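Structured outputs pay off because they can be rejected mechanically before anything runs. The sketch below assumes the model is asked to reply with a JSON object of the form `{"tool": ..., "args": {...}}`; that shape, and the names `PlannedAction` and `parse_action`, are illustrative assumptions rather than a standard.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class PlannedAction:
    tool: str
    args: dict


def parse_action(raw: str) -> PlannedAction:
    """Turn raw model output into a PlannedAction, or raise ValueError."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    tool = data.get("tool")
    args = data.get("args", {})
    if not isinstance(tool, str) or not tool:
        raise ValueError("'tool' must be a non-empty string")
    if not isinstance(args, dict):
        raise ValueError("'args' must be a JSON object")
    return PlannedAction(tool=tool, args=args)


action = parse_action('{"tool": "search", "args": {"query": "open incidents"}}')
```

Free-form text such as "I think I should search next" fails at the first check, which gives the controller a clean signal to retry or stop instead of guessing what the model meant.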

Key trade-off:

More autonomy increases capability but reduces predictability.

High-Level Agentic AI Architecture Diagram

System View

       +---------------------+
       |    User / Event     |
       +----------+----------+
                  |
                  v
       +---------------------+
       |  Agent Controller   |  <-- lifecycle, limits, retries
       +----------+----------+
                  |
                  v
       +---------------------+
       |     Agent Core      |
       |  (Reason + Decide)  |
       +----------+----------+
                  |
           +------+------+
           |             |
           v             v
      +---------+ +-------------+
      | Planner | | Memory Mgr  |
      +----+----+ +------+------+
           |             |
           v             v
    +----------------------------+
    |         Tool Layer         |
    | (APIs, Services, Actions)  |
    +-------------+--------------+
                  |
                  v
         +------------------+
         | External Systems |
         +------------------+

Key Design Insight

The Agent Core should never call tools directly

All execution flows through a Controller layer

Memory and planning are subsystems, not prompts
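One way to read these rules: the core only proposes an action, and a controller decides whether and how it runs. The following is a rough sketch under that reading; `Controller`, the `(tool, args)` tuple shape, and the `core` callable are hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple

Action = Tuple[str, dict]  # (tool name, keyword arguments)


class Controller:
    """Owns the tool registry and the step limit; the core never sees the tools."""

    def __init__(self, tools: Dict[str, Callable[..., object]], max_steps: int = 5):
        self._tools = tools
        self._max_steps = max_steps

    def run(self, core: Callable[[List[tuple]], Optional[Action]]) -> List[tuple]:
        history: List[tuple] = []
        for _ in range(self._max_steps):
            action = core(history)            # the core proposes, it does not execute
            if action is None:
                break
            name, args = action
            if name not in self._tools:       # unknown tools are refused and recorded
                history.append((name, "error: unknown tool"))
                continue
            history.append((name, self._tools[name](**args)))
        return history


# Toy core: request one addition, then stop.
def core(history: List[tuple]) -> Optional[Action]:
    return ("add", {"a": 2, "b": 3}) if not history else None


history = Controller({"add": lambda a, b: a + b}).run(core)
print(history)  # [('add', 5)]
```

Because the core returns data instead of calling functions, limits, retries, and permission checks can all live in one place.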

2. State and Memory Management

Memory is essential for agent continuity, but unmanaged memory quickly becomes a liability.

Common memory types:

Short-term memory: Current task context

Long-term memory: Historical interactions, summaries

Episodic memory: Task-level traces for replay and debugging

Production patterns:

Explicit memory schemas

Memory expiration and summarization

Separation of “decision memory” and “audit memory”

Anti-pattern:

Dumping raw conversation history back into the model indefinitely.
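As an alternative to replaying the full history, a bounded buffer can keep the most recent turns verbatim and fold older ones into a running summary. In this sketch `BoundedMemory` and `naive_summarize` are made-up names, and the summarizer is a crude placeholder for what would normally be a model call.

```python
from collections import deque
from typing import Callable, Deque, List


class BoundedMemory:
    def __init__(self, max_turns: int, summarize: Callable[[str, str], str]):
        self._recent: Deque[str] = deque()
        self._max_turns = max_turns
        self._summarize = summarize
        self.summary = ""

    def add(self, turn: str) -> None:
        self._recent.append(turn)
        while len(self._recent) > self._max_turns:
            evicted = self._recent.popleft()              # oldest turn leaves the buffer
            self.summary = self._summarize(self.summary, evicted)

    def context(self) -> List[str]:
        """What gets sent to the model: summary first, then recent turns."""
        prefix = [f"[summary] {self.summary}"] if self.summary else []
        return prefix + list(self._recent)


def naive_summarize(summary: str, turn: str) -> str:
    # Placeholder only: keep the first 20 characters of each evicted turn.
    return (summary + "; " if summary else "") + turn[:20]


memory = BoundedMemory(max_turns=2, summarize=naive_summarize)
for turn in ["user: hi", "agent: hello", "user: refund order A1"]:
    memory.add(turn)
print(memory.context())
# ['[summary] user: hi', 'agent: hello', 'user: refund order A1']
```

The context size is now bounded by `max_turns` plus the summary length, regardless of how long the session runs.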

Memory Architecture Diagram

    +-----------------------+
    |   Short-Term Memory   |
    | (current task state)  |
    +-----------+-----------+
                |
                v
    +-----------------------+
    |    Working Summary    |
    | (compressed context)  |
    +-----------+-----------+
                |
                v
    +-----------------------+
    |   Long-Term Memory    |
    | (vector / structured) |
    +-----------+-----------+
                |
                v
    +-----------------------+
    |  Audit & Replay Log   |
    |  (immutable, append)  |
    +-----------------------+

Production Rule

Decision memory ≠ Audit memory

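Never optimize audit logs for token cost. They exist for replay and accountability, not for the model's context window, so keep them complete.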
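One possible way to make an append-only log tamper-evident is to chain each entry to the hash of the previous one. The sketch below keeps entries in memory for brevity; a real system would write to durable storage. `AuditLog` and its methods are illustrative names, and hash chaining is an assumption of this example, not something the architecture above requires.

```python
import hashlib
import json
from typing import List


class AuditLog:
    def __init__(self) -> None:
        self._entries: List[dict] = []

    def append(self, event: dict) -> str:
        """Store the full event, linked to the previous entry's hash."""
        prev = self._entries[-1]["hash"] if self._entries else ""
        payload = json.dumps(event, sort_keys=True)       # stored uncompressed
        digest = hashlib.sha256((prev + payload).encode()).hexdigest()
        self._entries.append({"event": payload, "prev": prev, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any edited or removed entry breaks it."""
        prev = ""
        for entry in self._entries:
            expected = hashlib.sha256((prev + entry["event"]).encode()).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append({"step": 1, "tool": "search", "args": {"query": "open incidents"}})
log.append({"step": 2, "tool": "summarize", "args": {}})
print(log.verify())  # True
```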

3. Planning Layer

Planning determines how the agent decomposes a goal.

Common approaches:

ReAct-style reasoning (interleaved thought/action)

Plan-and-execute (explicit plan first, then execution)

Tree-based planning (for complex decision spaces)

Production guidance:

Prefer bounded, explicit plans

Validate plans before execution

Treat planning as fallible input, not ground truth
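Validating a plan before execution can be as simple as checking it against a step limit and the set of registered tools. A minimal sketch, assuming a plan is a list of `{"tool": ..., "args": {...}}` dictionaries (a hypothetical shape) and using the made-up name `validate_plan`:

```python
from typing import List, Set


def validate_plan(plan: List[dict], known_tools: Set[str], max_steps: int = 8) -> List[str]:
    """Return a list of problems; an empty list means the plan may run."""
    problems: List[str] = []
    if not plan:
        problems.append("plan is empty")
    if len(plan) > max_steps:
        problems.append(f"plan has {len(plan)} steps, limit is {max_steps}")
    for i, step in enumerate(plan):
        tool = step.get("tool")
        if tool not in known_tools:
            problems.append(f"step {i}: unknown tool {tool!r}")
        if not isinstance(step.get("args", {}), dict):
            problems.append(f"step {i}: args must be an object")
    return problems


plan = [
    {"tool": "search", "args": {"query": "open incidents"}},
    {"tool": "drop_database", "args": {}},
]
print(validate_plan(plan, known_tools={"search", "summarize"}))
# ["step 1: unknown tool 'drop_database'"]
```

Returning every problem at once, instead of failing on the first, gives the planner enough feedback to repair the plan in a single retry.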

4. Tooling and Action Interfaces

Tools are where agents interact with real systems—and where most failures occur.

Design principles:

Strict input/output schemas

Idempotent operations

Permission-scoped tools

Sandboxed execution environments

Example:

An agent should never have unrestricted access to production databases or deployment systems.

Critical insight:

Tools are APIs. Design them with the same rigor as any external interface.
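To make "strict schemas" and "idempotent operations" concrete, here is a small sketch of a tool whose input is a validated dataclass and whose effect is applied at most once per idempotency key. The refund scenario, the 50,000-cent cap, and the names `RefundRequest` and `RefundTool` are invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class RefundRequest:
    order_id: str
    amount_cents: int
    idempotency_key: str

    def __post_init__(self) -> None:
        # Schema checks run in code, before the tool body is ever reached.
        if not self.order_id:
            raise ValueError("order_id is required")
        if not (0 < self.amount_cents <= 50_000):
            raise ValueError("amount_cents outside the permitted range")


class RefundTool:
    def __init__(self) -> None:
        self._completed: Dict[str, str] = {}   # idempotency key -> receipt
        self.calls_executed = 0

    def __call__(self, req: RefundRequest) -> str:
        if req.idempotency_key in self._completed:
            return self._completed[req.idempotency_key]   # retry: no second refund
        self.calls_executed += 1                          # stand-in for the real side effect
        receipt = f"refund:{req.order_id}:{req.amount_cents}"
        self._completed[req.idempotency_key] = receipt
        return receipt


tool = RefundTool()
req = RefundRequest(order_id="A1", amount_cents=1200, idempotency_key="run-7-step-3")
first, second = tool(req), tool(req)
print(first == second, tool.calls_executed)  # True 1
```

Idempotency matters more for agents than for ordinary clients, because a retried or looping agent will repeat calls that a human operator would not.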

5. Control Layer (Guardrails)

No production agent should operate without control mechanisms.

Essential guardrails:

Maximum step counts

Budget and token limits

Action allowlists

Human-in-the-loop checkpoints for high-risk actions

Fail-safe design:

When in doubt, agents should defer, escalate, or stop, not improvise.
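The guardrails listed above can be combined into one check that runs before every action. This is a sketch under simplifying assumptions: token counts are caller-supplied estimates, `approve` is a hypothetical hook that would route to a human reviewer, and `Guardrails` and `GuardrailViolation` are made-up names.

```python
from typing import Callable, Set


class GuardrailViolation(Exception):
    """Raised when an action must not proceed; the caller should stop or escalate."""


class Guardrails:
    def __init__(self, allowed: Set[str], high_risk: Set[str], max_steps: int,
                 max_tokens: int, approve: Callable[[str], bool]):
        self.allowed, self.high_risk = allowed, high_risk
        self.max_steps, self.max_tokens = max_steps, max_tokens
        self.approve = approve          # human-in-the-loop hook (hypothetical)
        self.steps = 0
        self.tokens = 0

    def check(self, action: str, estimated_tokens: int) -> None:
        if action not in self.allowed:
            raise GuardrailViolation(f"{action!r} is not on the allowlist")
        if self.steps >= self.max_steps:
            raise GuardrailViolation("step limit reached")
        if self.tokens + estimated_tokens > self.max_tokens:
            raise GuardrailViolation("token budget exceeded")
        if action in self.high_risk and not self.approve(action):
            raise GuardrailViolation(f"{action!r} was not approved")
        # Only count the step once every check has passed.
        self.steps += 1
        self.tokens += estimated_tokens


guard = Guardrails(
    allowed={"read_ticket", "send_email"},
    high_risk={"send_email"},
    max_steps=2,
    max_tokens=100,
    approve=lambda action: False,       # stand-in reviewer that declines everything
)
guard.check("read_ticket", estimated_tokens=40)   # passes
```

Every failure path raises instead of returning a default, which matches the fail-safe rule: a blocked agent stops or escalates, it does not continue with a weaker action.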

Common Failure Modes (and How to Prevent Them)

1. Goal Drift

The agent gradually deviates from its original objective.

Mitigation:

Re-anchor goals at each iteration

Use immutable goal definitions

Validate actions against the original intent
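A lightweight way to apply these mitigations is to store the goal in an immutable object and rebuild every prompt from it. In this sketch the `Goal` fields, the prompt wording, and the use of a tool set as a proxy for "original intent" are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)            # frozen: the goal cannot be reassigned mid-run
class Goal:
    description: str
    allowed_tools: FrozenSet[str]


def build_step_prompt(goal: Goal, recent_context: str) -> str:
    # Re-anchor: the original goal text leads every prompt, verbatim.
    return (
        f"GOAL (fixed): {goal.description}\n\n"
        f"CONTEXT:\n{recent_context}\n\n"
        "Propose the next action."
    )


def within_intent(goal: Goal, tool: str) -> bool:
    # Crude proxy for intent: the goal declares up front which tools it needs.
    return tool in goal.allowed_tools


goal = Goal("Summarize the open support ticket", frozenset({"read_ticket", "summarize"}))
prompt = build_step_prompt(goal, "ticket text loaded")
print(within_intent(goal, "send_email"))  # False
```

A tool set is a blunt check. It will not catch an agent that drifts while staying inside its permitted tools, so it complements goal re-anchoring and does not replace it.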

2. Infinite or Degenerate Loops

Agents repeat reasoning or tool calls endlessly.

Mitigation:

Step counters

Loop detection via state hashes

Explicit “no-progress” termination rules
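Loop detection via state hashes can be sketched as follows: hash a canonical form of the state after each step and stop once the same hash recurs too often. `LoopDetector` is a hypothetical name, and which fields belong in the hashed state is a design decision this example leaves to the caller.

```python
import hashlib
import json
from typing import Dict


class LoopDetector:
    def __init__(self, max_repeats: int = 2):
        self._seen: Dict[str, int] = {}
        self._max_repeats = max_repeats

    def is_stuck(self, state: dict) -> bool:
        """Record the state; return True once it has recurred too often."""
        canonical = json.dumps(state, sort_keys=True)     # key order must not matter
        key = hashlib.sha256(canonical.encode()).hexdigest()
        self._seen[key] = self._seen.get(key, 0) + 1
        return self._seen[key] > self._max_repeats


detector = LoopDetector(max_repeats=2)
same_call = {"tool": "search", "args": {"query": "open incidents"}}
print([detector.is_stuck(same_call) for _ in range(3)])  # [False, False, True]
```

Timestamps, counters, and other fields that change on every step should be excluded from the hashed state, or identical behaviour will never produce identical hashes.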

3. Tool Misuse

Agents call tools in unintended or unsafe ways.

Mitigation:

Strict tool contracts

Input validation outside the model

Execution dry-runs for destructive actions
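A dry-run mode lets the controller, or a human, see what a destructive call would do before it happens. The sketch below uses a plain dictionary as a stand-in data store; `delete_records` and its return shape are illustrative assumptions.

```python
from typing import Dict, List


def delete_records(store: Dict[str, object], keys: List[str], dry_run: bool = True) -> dict:
    # Validation lives in code, outside the model, and runs in both modes.
    if not isinstance(keys, list) or not all(isinstance(k, str) for k in keys):
        raise TypeError("keys must be a list of strings")
    keys = list(dict.fromkeys(keys))                  # drop duplicates, keep order
    missing = [k for k in keys if k not in store]
    if missing:
        raise KeyError(f"unknown keys: {missing}")
    if dry_run:                                       # safe default: report, do not act
        return {"would_delete": keys, "deleted": []}
    for k in keys:
        del store[k]
    return {"would_delete": [], "deleted": keys}


store = {"a": 1, "b": 2, "c": 3}
preview = delete_records(store, ["a", "b"])           # nothing is removed yet
print(preview, len(store))
# {'would_delete': ['a', 'b'], 'deleted': []} 3
```

Making `dry_run=True` the default means that an agent which omits the flag previews the action and does not perform it.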

4. Non-Deterministic Debugging

Reproducing failures becomes nearly impossible.

Mitigation:

Full decision traces

Deterministic replays (stored prompts and model responses, plus fixed seeds where the model provider supports them)

Versioned prompts and tool schemas
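Replay becomes deterministic once model responses are recorded and served back from storage, so a failure can be reproduced without calling the live model. A minimal record-and-replay sketch follows; `tape_key`, `recording`, and `replaying` are hypothetical names, and keying the tape by prompt version plus prompt text is a simplification that assumes each prompt occurs once per run.

```python
import hashlib
from typing import Callable, Dict


def tape_key(prompt_version: str, prompt: str) -> str:
    return hashlib.sha256(f"{prompt_version}\n{prompt}".encode()).hexdigest()


def recording(model: Callable[[str], str], prompt_version: str,
              tape: Dict[str, str]) -> Callable[[str], str]:
    """Wrap a live model so every response is stored on the tape."""
    def call(prompt: str) -> str:
        response = model(prompt)
        tape[tape_key(prompt_version, prompt)] = response
        return response
    return call


def replaying(prompt_version: str, tape: Dict[str, str]) -> Callable[[str], str]:
    """Serve responses from the tape only; never touch the live model."""
    def call(prompt: str) -> str:
        key = tape_key(prompt_version, prompt)
        if key not in tape:
            raise KeyError("prompt not on tape: the replay diverged from the recording")
        return tape[key]
    return call


# Stand-in "model" whose answer changes on every call.
counter = {"n": 0}
def flaky_model(prompt: str) -> str:
    counter["n"] += 1
    return f"answer #{counter['n']} to {prompt}"

tape: Dict[str, str] = {}
live = recording(flaky_model, "v1", tape)
original = live("plan the next step")
replayed = replaying("v1", tape)("plan the next step")
print(original == replayed)  # True
```

Including the prompt version in the key means a replay against a changed prompt template fails loudly instead of silently returning a response recorded under different instructions.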

Observability and Debugging

Observability is not optional for agentic systems.

What to log:

Agent decisions and plans

Tool calls and responses

Token usage and latency

Failure and fallback paths
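As a starting point, each tool call can be wrapped so that its arguments, outcome, and latency are written as one structured record. In this sketch `traced_call` is a made-up helper, records are collected in a list in place of a real logging backend, and token usage is left out because it would have to come from the model client.

```python
import json
import time
from typing import Callable, List


def traced_call(trace: List[str], run_id: str, tool: str,
                fn: Callable[..., object], **kwargs) -> object:
    """Run fn(**kwargs) and append one JSON record to the trace, even on failure."""
    start = time.perf_counter()
    record = {"run_id": run_id, "tool": tool, "args": kwargs}
    try:
        result = fn(**kwargs)
        record.update(status="ok", result=repr(result))
        return result
    except Exception as exc:
        record.update(status="error", error=repr(exc))   # failure paths are logged too
        raise
    finally:
        record["latency_ms"] = round((time.perf_counter() - start) * 1000, 3)
        # default=repr keeps the log line valid JSON for non-serializable arguments.
        trace.append(json.dumps(record, sort_keys=True, default=repr))


trace: List[str] = []
total = traced_call(trace, "run-1", "add", lambda a, b: a + b, a=2, b=3)
print(total, json.loads(trace[0])["status"])  # 5 ok
```

A shared `run_id` on every record is what later allows a full decision trace to be reassembled from individual log lines.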

Advanced practices:

Agent trace visualizations

Simulation environments for replay

Shadow runs for new agent versions

If you cannot explain why an agent acted, you should not deploy it.

Evaluation and Testing

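Traditional accuracy metrics do not apply cleanly to agents: a run is a multi-step trajectory that can reach a goal by many paths, not a single prediction to compare against a label.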

Recommended evaluation layers:

Unit tests for tools

Simulation-based task completion

Regression testing on known scenarios

Cost and latency benchmarking

Key metric shift:

From “correct answer” to “acceptable outcome under constraints.”
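"Acceptable outcome under constraints" can be turned into an explicit pass/fail rule over simulated runs. The fields and thresholds below are illustrative assumptions, not recommended values, and `RunResult`, `acceptable`, and `pass_rate` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RunResult:
    task_completed: bool
    cost_usd: float
    latency_s: float
    unsafe_actions: int


def acceptable(r: RunResult, max_cost_usd: float, max_latency_s: float) -> bool:
    """A run passes only if it finished the task AND stayed inside every constraint."""
    return (
        r.task_completed
        and r.unsafe_actions == 0
        and r.cost_usd <= max_cost_usd
        and r.latency_s <= max_latency_s
    )


def pass_rate(results: List[RunResult], max_cost_usd: float, max_latency_s: float) -> float:
    if not results:
        return 0.0
    passed = sum(acceptable(r, max_cost_usd, max_latency_s) for r in results)
    return passed / len(results)


runs = [
    RunResult(task_completed=True, cost_usd=0.04, latency_s=6.0, unsafe_actions=0),
    RunResult(task_completed=True, cost_usd=0.90, latency_s=5.0, unsafe_actions=0),  # too costly
    RunResult(task_completed=True, cost_usd=0.03, latency_s=4.0, unsafe_actions=1),  # unsafe
    RunResult(task_completed=False, cost_usd=0.01, latency_s=2.0, unsafe_actions=0),
]
print(pass_rate(runs, max_cost_usd=0.10, max_latency_s=10.0))  # 0.25
```

Tracking this rate across agent versions turns the regression scenarios above into a single number that can gate a release.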

Build vs Buy: Frameworks Are Not Architectures

Agent frameworks (e.g., LangGraph, CrewAI, Auto-GPT variants) can accelerate development but do not replace architectural responsibility.

Use frameworks for:

Orchestration scaffolding

Experimentation

Do not outsource:

Safety decisions

Tool permissions

Memory governance

Production controls

Final Design Principles

To summarize, production-grade Agentic AI systems should follow these principles:

Bound autonomy aggressively

Make state explicit and auditable

Treat tools as critical infrastructure

Design for failure, not success

Prefer controllability over cleverness

Agentic AI is not about building smarter models—it is about building trustworthy systems around them.

Conclusion

Agentic AI unlocks powerful new capabilities, but only when engineered with discipline. The leap from prototype to production requires rethinking architecture, safety, and operational practices.

Teams that succeed will be those who treat Agentic AI not as magic, but as distributed systems with probabilistic components—systems that demand the same rigor as any production platform.