From Chatbots to Agents: Why 2026 Is the Year of Agentic Workflows

Agentic workflows are replacing chatbots. Learn how to design, build, and ship production-ready AI agents in Python before 2026.

If you are a Python engineer or an engineering leader, chances are you’ve already shipped at least one generative AI feature: a support chatbot, a Q&A bot over docs, or an internal helper sitting in Slack.

Those were the warm-up.

The next phase is agentic workflows: Python-based AI agents with tools, memory, and guardrails that actually execute business processes end-to-end, not just answer questions.

By 2026, the organizations that win with AI will be the ones whose core workflows—support, onboarding, collections, ops—are run by agents, with humans supervising and handling edge cases. This post is a roadmap for getting there with a Python stack.

We will cover:

A clear definition of agentic workflows for Python engineers

How agentic systems differ from chatbots and simple RAG bots

A reference Python architecture for agents

Concrete code snippets for tools, agent loops, and observability

A 12-month plan to go from “chat demo” to production AI agents

What is an “agentic workflow”? (Python engineer’s definition)

From a Python perspective, an AI agent is basically a loop over:

Goal + context as input

Call to an LLM with tools / function-calling

Tool execution (Python functions, HTTP clients, DB calls)

State updates (in a DB or cache)

Stop when the goal is reached, or escalate

An agentic workflow is a repeatable process implemented as one or more agents orchestrating those tools to deliver an outcome (ticket resolved, invoice reconciled, lead qualified), not just a text answer.

You can think of an agent as a “soft” microservice where some of the decision logic lives in an LLM, but everything around it (APIs, data, policies, logging) is regular Python.

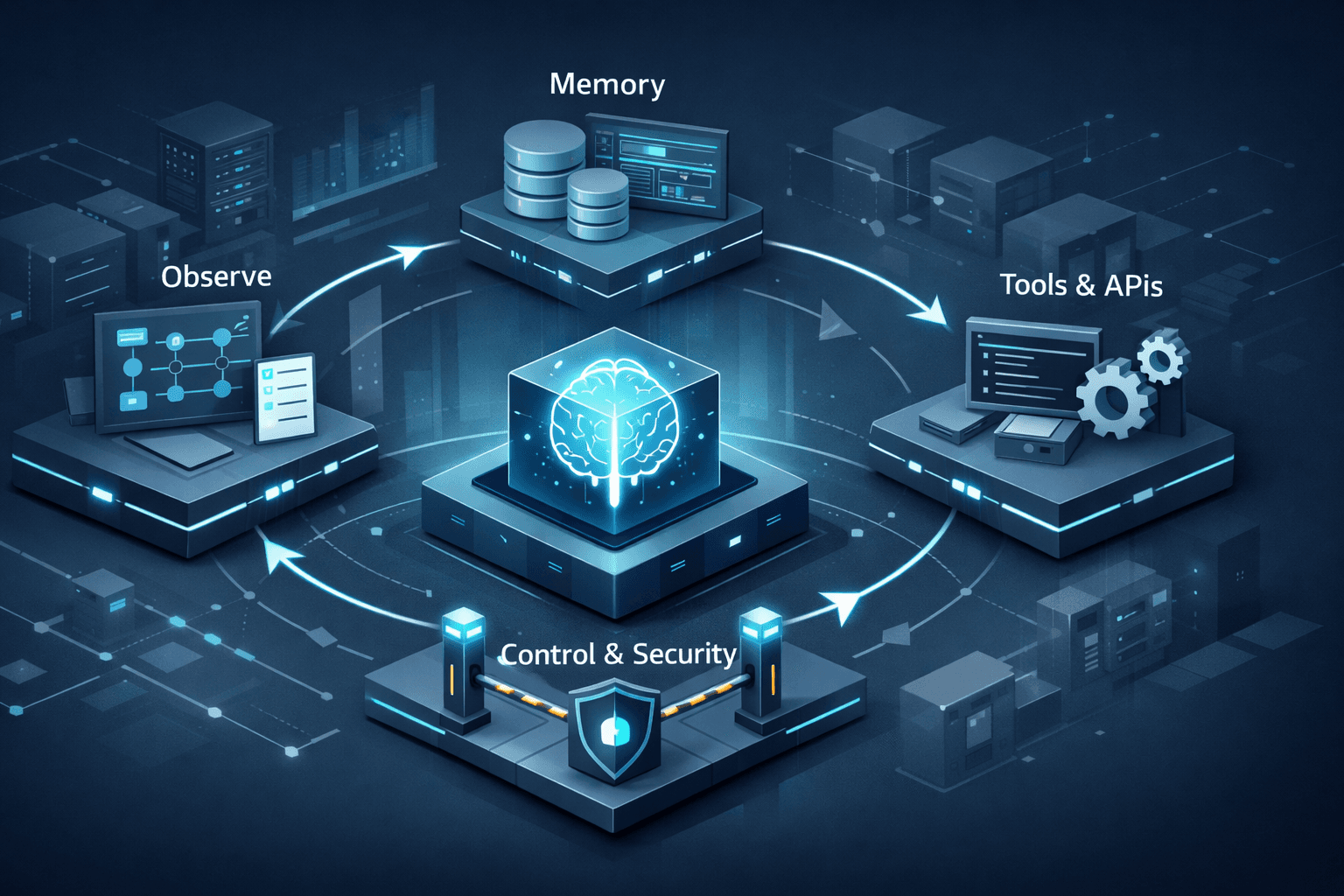

Typical components in a Python-based agentic workflow:

LLM client (OpenAI, Anthropic, etc.) with function/tool calling

Tool layer implemented in Python (Pydantic models, requests/httpx, SQLAlchemy, etc.)

Orchestrator running in something like FastAPI / Celery / worker processes

State store (PostgreSQL, Redis, vector DB, S3, etc.)

Guardrails implemented as Python checks, policies, and validators

Observability via logging, traces, and metrics

Chatbots vs agentic workflows: what changes in code

1. Request–response vs goal-oriented loops

A classic chatbot is usually a single HTTP handler, stateless except for a bit of session history:

# classic chatbot in FastAPI (simplified)

from fastapi import FastAPI

from pydantic import BaseModel

from llm_client import chat_completion # your LLM wrapper

app = FastAPI()

class ChatRequest(BaseModel):

user_id: str

message: str

history: list[str] = []

@app.post("/chat")

async def chat(req: ChatRequest):

messages = [{"role": "user", "content": m} for m in req.history + [req.message]]

answer = await chat_completion(messages)

return {"answer": answer}

An agentic workflow endpoint typically takes a goal, kicks off an execution, and runs a loop:

# agentic workflow entrypoint

from fastapi import FastAPI

from pydantic import BaseModel

from uuid import uuid4

from agent_runtime import run_support_workflow # your agent loop

app = FastAPI()

class ResolveTicketRequest(BaseModel):

ticket_id: str

user_id: str

@app.post("/workflows/support/resolve")

async def resolve_ticket(req: ResolveTicketRequest):

execution_id = str(uuid4())

result = await run_support_workflow(execution_id, req.ticket_id, req.user_id)

return {

"execution_id": execution_id,

"status": result.status,

"resolution": result.resolution,

}

Key difference: we treat “resolve this ticket” as a workflow execution, not a single chat turn.

2. Answer engine vs action engine

Chatbots return text. Agents call Python tools that hit APIs, query databases, and mutate state.

Define tools as structured functions:

from pydantic import BaseModel

from typing import Any

# tool input/output schemas

class GetOrderInput(BaseModel):

order_id: str

class Order(BaseModel):

id: str

status: str

amount: float

currency: str

class IssueRefundInput(BaseModel):

order_id: str

amount: float

# tool registry

async def get_order(args: GetOrderInput) -> Order:

# e.g. load from Postgres via SQLAlchemy

order_row = await db.fetch_one(

"SELECT id, status, amount, currency FROM orders WHERE id = :id",

{"id": args.order_id},

)

return Order(**order_row)

async def issue_refund(args: IssueRefundInput) -> dict[str, Any]:

# call payments API with httpx

resp = await payments_client.post(

"/refunds",

json={"order_id": args.order_id, "amount": args.amount},

)

resp.raise_for_status()

return resp.json()

TOOLS = {

"get_order": {

"schema": GetOrderInput,

"handler": get_order,

},

"issue_refund": {

"schema": IssueRefundInput,

"handler": issue_refund,

},

}

Then you expose these as functions in your LLM call (function calling / tools), and the agent loop decides when to call get_order or issue_refund.

3. Prompt history vs explicit state and memory

Instead of stuffing everything into a prompt, model workflow state explicitly in your DB.

Example state model:

from pydantic import BaseModel

from typing import Any, Literal

class WorkflowStatus(str):

RUNNING = "running"

SUCCEEDED = "succeeded"

FAILED = "failed"

class WorkflowExecution(BaseModel):

id: str

type: str # e.g. "support_resolve"

status: WorkflowStatus

input: dict[str, Any]

state: dict[str, Any]

events: list[dict[str, Any]]

You can store this as JSON in Postgres. Each agent step updates state and appends to events (tool calls, decisions, messages). That gives you replay and debugging “for free” compared to opaque prompts.

4. Single model vs multi-agent Python services

In Python, multi-agent usually means:

A router agent (fast, cheap) to pick the correct workflow or specialist

One or more specialist agents (billing, shipping, support, etc.)

An orchestrator that coordinates them

Simple orchestrator sketch:

from router_agent import classify_intent

from agents import billing_agent, shipping_agent, support_agent

async def orchestrate(goal: str, context: dict) -> dict:

intent = await classify_intent(goal, context)

if intent == "billing":

return await billing_agent.execute(goal, context)

elif intent == "shipping":

return await shipping_agent.execute(goal, context)

else:

return await support_agent.execute(goal, context)

You can back each agent with its own LLM config, tools, and policies.

5. UX metrics vs backend metrics

In Python backends, we’re used to SLOs and SLI dashboards. Treat agentic workflows the same way:

Track, per workflow:

success_rateescalation_ratep95_cycle_timecost_per_execution(tokens + infra + human-minutes)policy_violation_count

You can expose these with Prometheus / OpenTelemetry and use Grafana for dashboards.

A Python reference architecture for agentic workflows

Here’s a practical Python architecture you can implement incrementally:

FastAPI (or Django/Flask)

- API gateway for workflow triggers and human review UIs

Agent runtime module

Python package that implements the agent loop(s)

Wraps your LLM provider’s Python SDK

Tool layer package

Pydantic models for inputs/outputs

SQLAlchemy or async ORM for DB operations

httpx/requestsclients for external servicesCentral registry of tools + permissions

State store

PostgreSQL for workflow executions, events, and config

Optional Redis for short-lived session cache

Optional vector DB (e.g., pgvector, Qdrant) for retrieval

Guardrails module

Policy checks implemented as Python functions

Validations on tool arguments and high-risk actions

Redaction and PII handling

Worker layer (Celery / RQ / custom)

For longer-running workflows and background processing

LLM calls and tool calls processed off the main request thread

Observability

structlogorloggingwith JSON logsOpenTelemetry traces for each agent run

Metrics export (Prometheus, StatsD, etc.)

Example: A Python agentic support workflow

Let’s put this together in a minimal example for “resolve Level-1 tickets”.

1. Define tools

# tools/support_tools.py

from pydantic import BaseModel

from typing import Any

class GetTicketInput(BaseModel):

ticket_id: str

class Ticket(BaseModel):

id: str

subject: str

body: str

status: str

customer_id: str

async def get_ticket(args: GetTicketInput) -> Ticket:

row = await db.fetch_one(

"SELECT * FROM tickets WHERE id = :id",

{"id": args.ticket_id},

)

return Ticket(**row)

class UpdateTicketInput(BaseModel):

ticket_id: str

status: str

answer: str

async def update_ticket(args: UpdateTicketInput) -> dict[str, Any]:

await db.execute(

"""

UPDATE tickets

SET status = :status, answer = :answer

WHERE id = :id

""",

{

"id": args.ticket_id,

"status": args.status,

"answer": args.answer,

},

)

return {"ok": True}

class EscalateInput(BaseModel):

ticket_id: str

reason: str

async def escalate_to_human(args: EscalateInput) -> dict[str, Any]:

# mark ticket as "needs_human" and notify

await db.execute(

"""

UPDATE tickets

SET status = 'needs_human', escalation_reason = :reason

WHERE id = :id

""",

{"id": args.ticket_id, "reason": args.reason},

)

return {"ok": True}

SUPPORT_TOOLS = {

"get_ticket": {"schema": GetTicketInput, "handler": get_ticket},

"update_ticket": {"schema": UpdateTicketInput, "handler": update_ticket},

"escalate_to_human": {"schema": EscalateInput, "handler": escalate_to_human},

}

2. Implement the agent loop

# agent_runtime/support_agent.py

from typing import Any

from llm_client import call_llm_with_tools

from tools.support_tools import SUPPORT_TOOLS

from state_store import load_execution, save_execution, init_execution

MAX_STEPS = 10

async def run_support_workflow(execution_id: str, ticket_id: str, user_id: str):

execution = await load_execution(execution_id)

if execution is None:

execution = await init_execution(

execution_id,

type="support_resolve",

input={"ticket_id": ticket_id, "user_id": user_id},

)

steps = execution.state.get("steps", 0)

while steps < MAX_STEPS and not execution.state.get("done", False):

observation = {

"input": execution.input,

"events": execution.events[-5:], # last few events for context

}

# call LLM with tool definitions (names + schemas)

decision = await call_llm_with_tools(

observation=observation,

tools=SUPPORT_TOOLS,

)

if decision["type"] == "tool_call":

tool_name = decision["tool_name"]

tool_args = decision["arguments"]

tool = SUPPORT_TOOLS[tool_name]

args_model = tool["schema"](**tool_args)

result = await tool["handler"](args_model)

execution.events.append(

{

"type": "tool_call",

"tool": tool_name,

"args": tool_args,

"result": result,

}

)

elif decision["type"] == "final_answer":

# final answer to customer + ticket closure

answer = decision["answer"]

from tools.support_tools import UpdateTicketInput, update_ticket

await update_ticket(

UpdateTicketInput(

ticket_id=ticket_id,

status="resolved",

answer=answer,

)

)

execution.state["done"] = True

execution.state["resolution"] = answer

elif decision["type"] == "escalate":

from tools.support_tools import EscalateInput, escalate_to_human

await escalate_to_human(

EscalateInput(

ticket_id=ticket_id,

reason=decision.get("reason", "unspecified"),

)

)

execution.state["done"] = True

execution.state["resolution"] = "escalated"

steps += 1

execution.state["steps"] = steps

await save_execution(execution)

if not execution.state.get("done", False):

# safety net: escalate if loop ended without resolution

from tools.support_tools import EscalateInput, escalate_to_human

await escalate_to_human(

EscalateInput(

ticket_id=ticket_id,

reason="max_steps_reached",

)

)

execution.state["done"] = True

execution.state["resolution"] = "escalated_max_steps"

await save_execution(execution)

return execution

This is intentionally simplified, but it shows the core ideas:

Pydantic for tool schemas

A loop guarded by

MAX_STEPSAll decisions and tool calls logged to

execution.eventsSafe fallback to escalation

In a real system you would also:

Separate proposal mode (human approval required) vs autonomous mode

Enforce policy checks before executing high-risk tools

Add structured logging and tracing around every step

Testing and evaluating Python agents

Beyond unit tests, you’ll want:

Golden test cases: small JSON fixtures with input + expected pattern of actions

Offline eval scripts: Python scripts that iterate over test cases and compute success/failure metrics for a given model/prompt/version

Replay tools: Python CLI or web UI to replay a problematic execution step-by-step using stored state and events

Because everything is Python + JSON, you can lean heavily on your existing test stack: pytest, fixtures, CI pipelines, and static analysis.

A 12-month roadmap for a Python-based org

Short version tailored to a Python backend:

Quarter 1–2

Pick 1–2 workflows (e.g., specific support queue, internal IT requests)

Build a clean tool layer as Python packages with Pydantic models

Stand up an agent runtime and state tables in Postgres

Ship first workflow in copilot mode behind FastAPI endpoints

Log everything; build a simple replay script

Quarter 3–4

Add more workflows (billing, collections, onboarding)

Introduce router + specialist agents

Implement guardrails as Python policy checks around tools

Add metrics and traces; integrate with your existing monitoring stack

Allow partial autonomy for low-risk segments based on metrics

After 12 months

Treat “workflows” as products with owners and roadmaps

Standardize an internal Python agent framework (base classes, utilities, templates)

Start experimenting with outcome-based metrics (e.g., tickets resolved per day, days-sales-outstanding, time-to-onboard)

Closing thoughts

For Python teams, the move from chatbots to agentic workflows is not a new language or framework; it is a change in how we structure backend systems:

LLMs become controllers inside Python workflows

Tools are just typed functions with business logic

Workflows become goal-oriented processes, not just HTTP endpoints

If you have FastAPI, Postgres, a message queue, and a preferred LLM provider, you already have 80% of what you need. The remaining 20% is:

A clean tool layer

A small, robust agent runtime

Good evals and observability

Start with one workflow, do it properly, and you will be in a strong position when “Which of your workflows are agentic?” becomes a standard question in 2026.