AI in Robotics & Bioengineering

Where Algorithms Meet Atoms, Nerves, and Scalpels

Executive takeaways

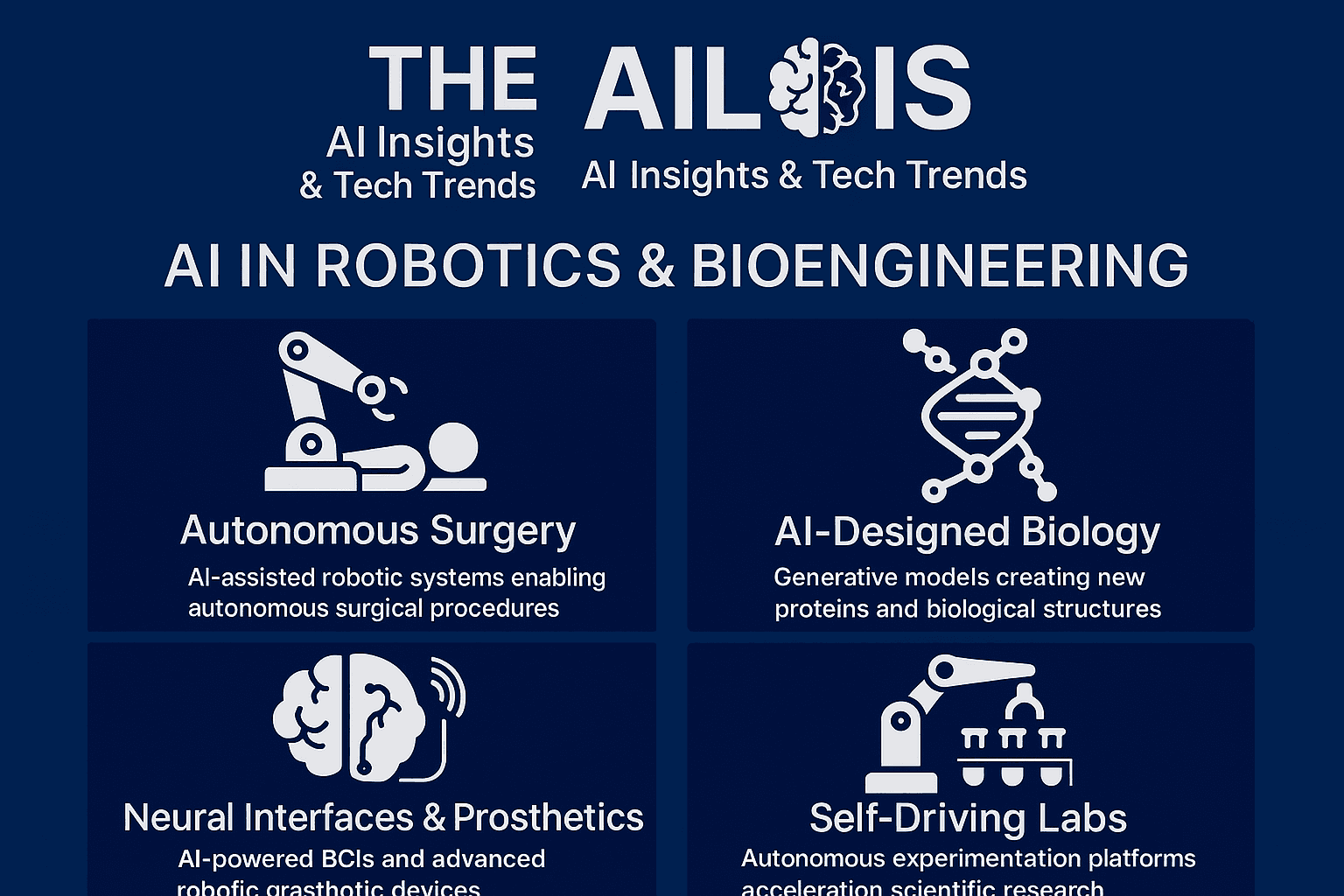

AI is an amplifier across physical domains. It’s catalyzing advances in surgery, prosthetics and brain‑computer interfaces (BCIs), lab automation, and bio‑design. It does so not as a standalone “app,” but as an engine that turns data into actions in the real world.

Protein design just crossed a historic threshold. AlphaFold‑enabled structure prediction and generative design are shortening the path from sequence to function, with concrete wins in biotherapeutics and global health preparedness.

Surgery is moving from teleoperation to autonomy. Vision‑language models and reinforcement learning are enabling robots to execute multi‑step surgical subtasks on benchtop and ex vivo models, hinting at future “shared autonomy” in the OR.

Neural interfaces are reaching natural conversational speed. AI decoders now synthesize expressive speech from neural activity in near‑real time, while “AI copilots” raise BCI task performance, pointing to more capable prosthetics and assistive robotics.

Self‑driving labs are compounding discovery. Autonomous experimentation platforms are reporting at least 10× more data than steady‑state approaches, along with tighter, lower‑waste loops between hypothesis and validation.

Regulation is catching up. The FDA’s 2025 draft guidance and the EU AI Act add lifecycle, transparency, and high‑risk obligations for AI‑enabled medical devices—critical for surgical robots, diagnostics, BCIs, and AI‑designed biologics.

1) AI‑designed biology: from structure to function

Why it matters. In protein science, AI has made a historic pivot from “seeing” (predicting structures) to “shaping” (designing new proteins). AlphaFold and related models broke open structure prediction, and the 2024 Nobel Prize in Chemistry recognized that impact, marking a rare moment where AI is formally credited for transforming a core scientific discipline.

The new workflow. Modern protein design stacks blend diffusion‑based backbone generation (e.g., RFdiffusion), sequence optimization (ProteinMPNN), and structure validation (AlphaFold) in high‑throughput loops, supported by emerging tools (e.g., Afpdb) that streamline structure manipulation and QC for thousands of designs.
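As a rough illustration of the generate, sequence, and validate loop described above, here is a minimal sketch. The three stage functions are hypothetical stubs that return mock values; they are not the real RFdiffusion, ProteinMPNN, or AlphaFold interfaces, and the confidence threshold of 80 is an assumed placeholder rather than a recommended cutoff.

```python
import random
from dataclasses import dataclass

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


@dataclass
class Design:
    backbone_id: int
    sequence: str
    confidence: float  # mock structure-confidence score on a 0-100 scale


def generate_backbone(rng, idx):
    """Hypothetical stub standing in for a diffusion-based backbone generator."""
    return {"id": idx, "length": rng.randint(60, 120)}


def design_sequence(rng, backbone):
    """Hypothetical stub standing in for a sequence-design model."""
    return "".join(rng.choice(AMINO_ACIDS) for _ in range(backbone["length"]))


def predict_confidence(rng, sequence):
    """Hypothetical stub standing in for structure validation (random mock score)."""
    return rng.uniform(40.0, 95.0)


def design_round(n_backbones, seqs_per_backbone, min_confidence, seed=0):
    """Run one generate -> sequence -> validate pass and keep designs above the cutoff."""
    rng = random.Random(seed)
    kept = []
    for i in range(n_backbones):
        backbone = generate_backbone(rng, i)
        for _ in range(seqs_per_backbone):
            seq = design_sequence(rng, backbone)
            score = predict_confidence(rng, seq)
            if score >= min_confidence:
                kept.append(Design(i, seq, score))
    # Highest-confidence designs first, ready to hand off for wet-lab testing.
    kept.sort(key=lambda d: d.confidence, reverse=True)
    return kept


shortlist = design_round(n_backbones=5, seqs_per_backbone=4, min_confidence=80.0)
```

In a real pipeline each stub would wrap a model call, and the filter would typically combine several quality checks rather than a single score.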

From pandemics to precision biologics. Expert commentary in global health argues AI biodesign should be treated as a strategic capability: within hours of sequencing a novel pathogen, AI can model target structures, prioritize epitopes, and accelerate countermeasure design—if paired with responsible access and guardrails.

Therapeutics are following. Reviews and case studies show AI accelerating antibody discovery—de‑risking sequence libraries, improving developability, and enabling de novo binders to tough targets; big‑pharma collaborations are validating the economics at scale.

Editorial note: Computational wins still require wet‑lab confirmation. That’s where robotics—and “self‑driving” labs—come in.

2) Autonomy in the operating room: robotics grows “hands and eyes”

From teleoperation to shared autonomy. Conventional surgical robots (like da Vinci) are teleoperated; outcomes vary with human skill and fatigue. A new wave links vision‑language models and reinforcement learning to surgical platforms, enabling robots to interpret video, imitate expert maneuvers, and execute suturing and tissue manipulation autonomously in controlled settings.

Why this is hard. The OR is dynamic: variable tissue, bleeding, motion, and changing light. Recent Science Robotics reviews detail the stack required (visual parsing, depth estimation, policy learning, and tight visual servoing) to generalize across instruments and anatomies, with early live‑animal validations now reported.

Near‑term path: assistive autonomy. Expect “copilot” features (camera auto‑framing, smart stapling, safety stops) to arrive first, improving consistency without removing the surgeon. Ambulatory surgery centers are already adopting robots for selected cases as economics and training models evolve.

Safety and standards. As robots move closer to humans (and even into humanoid forms for logistics and support), standards bodies are pushing new stability, privacy, and psychosocial risk frameworks specific to human‑scale mobile robots.

3) Neural interfaces, prosthetics, and exoskeletons: restoring motion and voice

Expressive speech from thought. In 2025, two independent teams reported BCIs that decode attempted speech and synthesize audio with intonation in near‑real time, reaching conversational speeds and even singing—moving assistive communication beyond slow letter‑by‑letter text.

AI copilots for BCIs. A complementary approach adds “shared autonomy”: AI copilots infer the user’s goal from neural signals plus task context and computer vision, then assist control of cursors or robotic arms, raising task performance and success rates.
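One way to picture shared autonomy is linear blending, a common arbitration scheme in the shared‑control literature. The sketch below is an illustration only: the copilot systems described above may use different methods, and the goal‑inference heuristic (direction alignment), the function names, and the `max_assist` value are all assumptions made for this example.

```python
import math


def infer_goal(cursor, decoded_velocity, goals):
    """Pick the goal best aligned with the decoded direction.

    Returns (goal, confidence), where confidence is the cosine of the angle
    between the decoded velocity and the direction to the goal, floored at 0.
    """
    speed = math.hypot(*decoded_velocity)
    if speed == 0 or not goals:
        return None, 0.0
    best, best_cos = None, -1.0
    for g in goals:
        dx, dy = g[0] - cursor[0], g[1] - cursor[1]
        dist = math.hypot(dx, dy)
        if dist == 0:
            continue  # already at this goal
        cos = (dx * decoded_velocity[0] + dy * decoded_velocity[1]) / (dist * speed)
        if cos > best_cos:
            best, best_cos = g, cos
    return best, max(0.0, best_cos)


def blend(cursor, decoded_velocity, goals, max_assist=0.6):
    """Blend the user's decoded velocity with a copilot velocity toward the inferred goal."""
    goal, confidence = infer_goal(cursor, decoded_velocity, goals)
    if goal is None:
        return decoded_velocity  # nothing to assist with; pass the user command through
    speed = math.hypot(*decoded_velocity)
    dx, dy = goal[0] - cursor[0], goal[1] - cursor[1]
    dist = math.hypot(dx, dy)
    assist = (speed * dx / dist, speed * dy / dist)  # same speed, aimed at the goal
    alpha = max_assist * confidence  # assistance never exceeds max_assist
    return (
        (1 - alpha) * decoded_velocity[0] + alpha * assist[0],
        (1 - alpha) * decoded_velocity[1] + alpha * assist[1],
    )


targets = [(10.0, 0.0), (0.0, 10.0)]
command = blend(cursor=(0.0, 0.0), decoded_velocity=(1.0, 0.2), goals=targets)
```

Capping `alpha` below 1 keeps the user in control: the copilot can straighten a noisy trajectory but cannot override the decoded intent entirely.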

Toward everyday use. Non‑invasive BCIs are also improving via AI‑enhanced signal processing, potentially broadening access beyond implant recipients. Meanwhile, perspectives in wearable robotics emphasize multimodal sensing, human‑in‑the‑loop control, and neural interfaces to make exoskeletons and prostheses feel embodied, not foreign.

Rehab gets smarter. Pairing robotics with closed‑loop spinal cord neuromodulation is showing promise for augmenting gait rehabilitation: early studies report better muscle activation patterns during robot‑assisted walking and cycling.

4) Autonomous experimentation: the rise of the self‑driving lab

What it is. Self‑driving labs (SDLs) couple robots, inline analytics, and AI optimizers to run closed‑loop experiments—automating hypothesis → experiment → analysis → next‑experiment. Policy and technical reviews stress both the upside (speed, reproducibility) and the need for governance on IP, safety, and workforce impacts.
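The hypothesis, experiment, analysis, and next‑experiment cycle can be sketched as a simple loop. Everything here is a stand‑in: `run_experiment` simulates an instrument with an invented yield curve, and `propose` is a naive explore‑then‑refine rule used in place of the Bayesian or other optimizers a real SDL would likely employ.

```python
import random


def run_experiment(temperature_c, rng):
    """Simulated instrument: a hypothetical yield curve peaking at 70 C, plus noise."""
    true_yield = max(0.0, 1.0 - ((temperature_c - 70.0) / 40.0) ** 2)
    return true_yield + rng.gauss(0.0, 0.01)


def propose(history, rng, low=20.0, high=120.0, step=5.0):
    """Choose the next condition: explore at random first, then refine near the best result."""
    if len(history) < 3:
        return rng.uniform(low, high)
    best_temperature, _ = max(history, key=lambda record: record[1])
    candidate = best_temperature + rng.uniform(-step, step)
    return min(high, max(low, candidate))  # stay inside the allowed operating range


def closed_loop(n_rounds=40, seed=1):
    """Run propose -> experiment -> record for a fixed budget of rounds."""
    rng = random.Random(seed)
    history = []
    for _ in range(n_rounds):
        temperature = propose(history, rng)
        measured_yield = run_experiment(temperature, rng)
        history.append((temperature, measured_yield))  # the analysis step feeds the next proposal
    return history


history = closed_loop()
best_temperature, best_yield = max(history, key=lambda record: record[1])
```

The structure matters more than the optimizer: every measurement is logged and immediately informs the next proposal, which is what makes the loop closed.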

The throughput jump. A 2025 advance in dynamic‑flow SDLs increased data collection at least 10‑fold over steady‑state approaches by continuously varying reaction conditions and reading outputs in real time, which also cut solvent use, idle time, and cost.

Why bioengineering benefits. Bioprocess optimization (media, feed, induction), enzyme evolution, and formulation science are natural fits for SDLs; public analyses urge national‑scale investments to turn AI proposals into validated materials and molecules faster.

5) Microrobots and targeted delivery: from benches to bodies

Directed payloads. Magnetic and biohybrid microrobots are being steered through complex geometries (e.g., intestines, joints) to deliver drugs locally. The goal is to improve on systemic administration, where only a tiny fraction of the dose actually reaches the target tissue. A 2025 demonstration combined catheter delivery, magnetic guidance, and gel‑based payloads with retrieval after release, an important step for clinical viability.

State of the field. Reviews catalog propulsion modes, guidance strategies, and release mechanisms, but emphasize translational hurdles: biocompatibility, imaging, real‑time control in vivo, and manufacturing at scale.

6) Risk, regulation, and responsible translation

United States (FDA). In January 2025 the FDA issued draft guidance covering lifecycle management and marketing submissions for AI‑enabled device software functions, with recommendations on risk, bias, and post‑market performance monitoring; it complements the agency’s transparency principles for ML medical devices and its living list of authorized AI‑enabled devices.

Performance drift & real‑world use. The FDA is also seeking public input on measuring real‑world performance and managing model drift once devices are deployed—central to BCIs, surgical automation, and diagnostic AI.
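To make drift monitoring concrete, one widely used statistic is the population stability index (PSI), which compares the distribution of a model input or output score at validation time with the distribution seen after deployment. The sketch below is illustrative only: it is not drawn from FDA guidance, and the bin count and the alert threshold of 0.25 are assumptions chosen for the example.

```python
import math


def population_stability_index(reference, live, n_bins=10, eps=1e-6):
    """PSI between two non-empty samples, using equal-width bins over the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins or 1.0  # fall back to 1.0 if the reference is constant

    def fractions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # clamp out-of-range values to the edge bins
            counts[idx] += 1
        # Floor each fraction at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    ref_frac = fractions(reference)
    live_frac = fractions(live)
    return sum((l - r) * math.log(l / r) for r, l in zip(ref_frac, live_frac))


ALERT_THRESHOLD = 0.25  # assumed cutoff for this example, not a regulatory value

validation_scores = [i / 100 for i in range(100)]        # mock scores at validation
deployed_scores = [0.5 + i / 200 for i in range(100)]    # mock scores after a shift

psi = population_stability_index(validation_scores, deployed_scores)
drift_detected = psi > ALERT_THRESHOLD
```

A statistic like this only flags that inputs or outputs have shifted; deciding whether clinical performance has degraded still requires labeled outcomes and human review.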

European Union (AI Act). The EU’s AI Act (Regulation (EU) 2024/1689) is in force with phased obligations through 2027. Medical AI that is itself, or is a safety component of, an MDR/IVDR device requiring third‑party conformity assessment is generally deemed high‑risk, which brings obligations for conformity assessment, risk management, data governance, and human oversight; the Commission’s 2025 guidance clarifies what counts as an “AI system.”

Biosecurity and dual‑use. Leading voices in global health policy urge proactive guardrails for AI biodesign—balancing speed against misuse risks, and expanding equitable access to algorithms and compute with safety by design.

7) How to evaluate opportunities (and hype) in 2025

Ask these questions:

Does the AI act on the world—or just predict? Prioritize use cases where models close the loop with sensors and actuators (robots, pumps, electrodes), not just dashboards.

What’s the validation path? For AI biodesign: is there a wet‑lab loop (SDL, CRO, internal platform) and appropriate in vivo follow‑up? For surgical autonomy: are there benchtop, ex vivo, and animal validations along a clear safety case?

How will it be regulated? Map the product to FDA pathways (including those for software as a medical device, SaMD) and EU AI Act risk categories early; plan for post‑market monitoring and transparency obligations.

Where is the human in the loop? Shared autonomy (surgeon copilots, BCI copilots) is a pragmatic middle ground that often yields earlier clinical wins.

Can it scale safely? For microrobots and wearables, probe manufacturability, retrieval, long‑term biocompatibility, and real‑time imaging/control.

8) Roadmap: building with AI across robotics & bioengineering

Launch a design→build→test loop for biologics. Pair a protein‑design stack (RFdiffusion/ProteinMPNN/AlphaFold) with a bench or SDL partner; track cycle time, hit rates, and developability metrics.
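Tracking these metrics can start very simply. The sketch below assumes a hypothetical record layout (`Cycle`) and uses made‑up numbers purely to show the arithmetic; "developable" here means whatever internal screen a team applies to its hits.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Cycle:
    designed_on: date    # when designs were handed off
    tested_on: date      # when wet-lab results came back
    n_tested: int        # designs actually tested
    n_hits: int          # designs meeting the activity criterion
    n_developable: int   # hits that also pass developability screens


def summarize(cycles):
    """Aggregate cycle time, hit rate, and developability across design rounds."""
    days = [(c.tested_on - c.designed_on).days for c in cycles]
    total_tested = sum(c.n_tested for c in cycles)
    total_hits = sum(c.n_hits for c in cycles)
    total_developable = sum(c.n_developable for c in cycles)
    return {
        "mean_cycle_days": sum(days) / len(days) if days else 0.0,
        "hit_rate": total_hits / total_tested if total_tested else 0.0,
        "developable_fraction_of_hits": total_developable / total_hits if total_hits else 0.0,
    }


# Illustrative, invented data for two design rounds.
rounds = [
    Cycle(date(2025, 1, 6), date(2025, 1, 27), n_tested=96, n_hits=6, n_developable=3),
    Cycle(date(2025, 2, 3), date(2025, 2, 17), n_tested=96, n_hits=12, n_developable=9),
]
summary = summarize(rounds)
```

Reporting the same three numbers every round makes it easier to see whether changes to the design stack or the bench protocol are actually shortening the loop.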

Pilot assistive autonomy in the OR. Start with vision‑AI that automates camera control and instrument tracking; collect systematic data to justify expanded autonomy under surgeon oversight.

Prototype AI‑BCI copilots for assistive devices. Combine neural decoding with goal‑inference and shared control to boost user success on everyday tasks before scaling hardware complexity.

Evaluate targeted delivery concepts with retrievability. Favor microrobot designs that have demonstrated guidance, payload release, and magnetic retrieval in complex geometries.

Operationalize compliance early. Stand up documentation and monitoring aligned to FDA draft guidance and EU AI Act high‑risk requirements; treat transparency and drift detection as product features, not afterthoughts.

Closing thought

AI’s greatest contributions in 2025 aren’t just smarter predictions—they’re safer, faster actions: stitching tissue, restoring a voice, evolving a protein, or running a week’s worth of experiments before lunch. The winners will be those who connect models to mechanisms—with rigorous validation, thoughtful oversight, and a relentless focus on patient benefit.