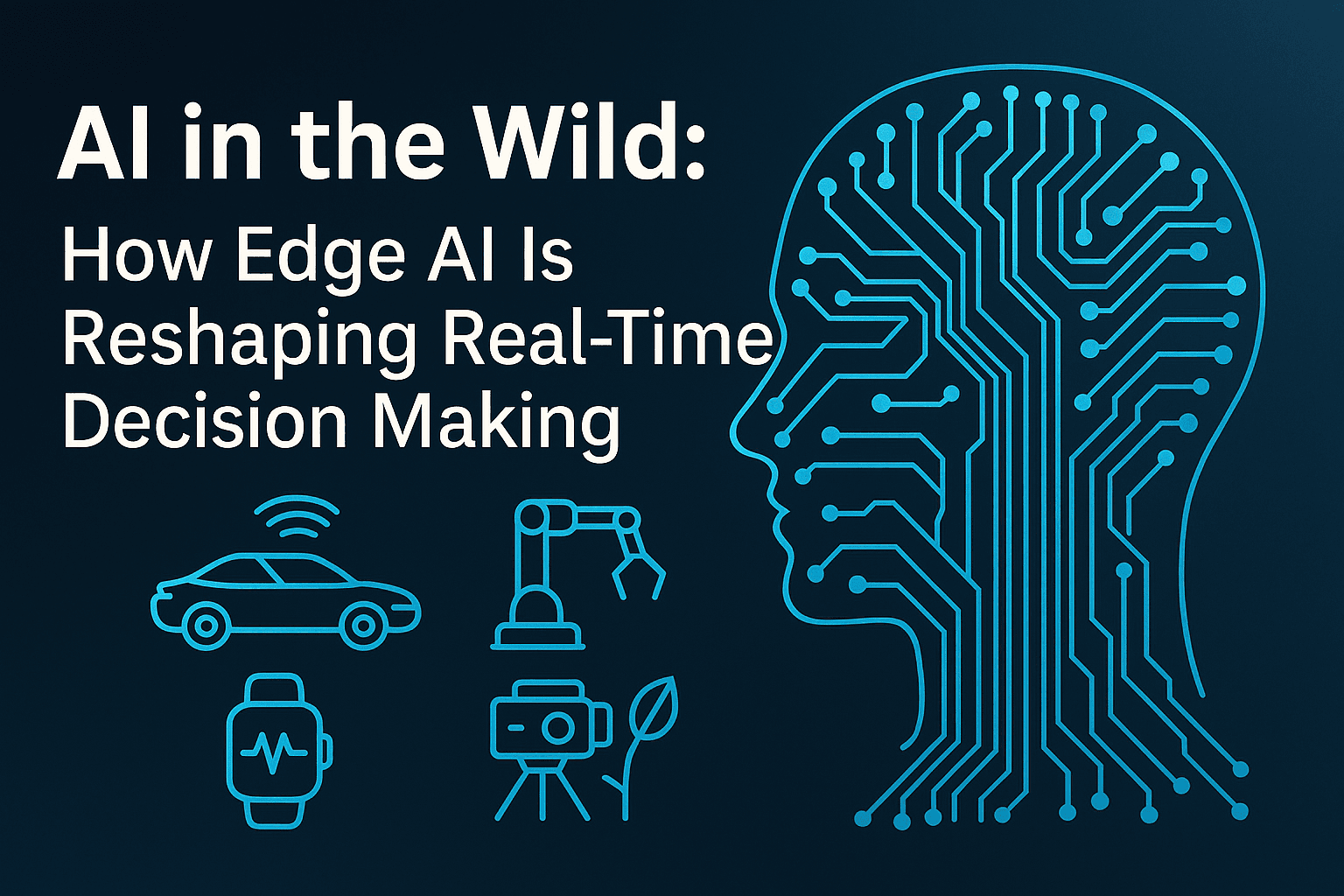

AI in the Wild

How Edge AI Is Reshaping Real-Time Decision Making

Introduction

In the traditional AI pipeline, data is collected at the edge, transmitted to centralized cloud servers for processing, and the results are sent back. That round trip breaks down in latency-sensitive, bandwidth-constrained, and privacy-critical environments. Edge AI addresses this by running machine learning inference directly on edge devices such as microcontrollers, embedded systems, and mobile hardware.

Edge AI leverages optimized models, hardware accelerators, and lightweight frameworks to perform real-time computation locally. This eliminates the round-trip latency of cloud communication, reduces dependency on network availability, and enhances data sovereignty by keeping sensitive information on-device.

From autonomous drones to industrial sensors, Edge AI is redefining how intelligence is deployed and scaled. In this article, we’ll explore its architecture, applications, challenges, and implications.

What Is Edge AI?

Edge AI refers to the deployment of AI models directly on edge devices—hardware located close to the data source. These devices include microcontrollers, smartphones, embedded systems, and smart cameras.

Core Components

Inference Engines: TensorFlow Lite, ONNX Runtime, PyTorch Mobile

Hardware Accelerators: Google Coral Edge TPU, NVIDIA Jetson, Apple Neural Engine (ANE)

Optimization Techniques: Quantization, Pruning, Knowledge Distillation

Local Processing: Reduces bandwidth and enhances responsiveness
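Quantization, listed above, maps 32-bit floating-point values onto 8-bit integers. The following is a minimal sketch of per-tensor affine (asymmetric) INT8 quantization in plain Python. It assumes simple min/max calibration. Production converters handle calibration and per-channel scales in their own ways, and the weight values here are hypothetical.

```python
def compute_qparams(values, qmin=-128, qmax=127):
    """Derive scale and zero-point so the observed range maps onto [qmin, qmax]."""
    lo = min(min(values), 0.0)  # include 0 in the range so zero is exactly representable
    hi = max(max(values), 0.0)
    if hi == lo:
        return 1.0, 0
    scale = (hi - lo) / (qmax - qmin)
    zero_point = round(qmin - lo / scale)
    zero_point = max(qmin, min(qmax, zero_point))
    return scale, zero_point

def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Float -> INT8: divide by scale, shift by zero-point, clamp to the int8 range."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]

def dequantize(q, scale, zero_point):
    """INT8 -> approximate float."""
    return [(x - zero_point) * scale for x in q]

weights = [-0.62, -0.1, 0.0, 0.33, 0.91]  # hypothetical layer weights
scale, zp = compute_qparams(weights)
q = quantize(weights, scale, zp)
restored = dequantize(q, scale, zp)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(q, zp, max_err)
```

When no value is clipped, the round-trip error per weight is at most half the scale. This bounded error is why INT8 models often lose little accuracy while using a quarter of the memory of float32.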

Why It Matters

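Latency Reduction: decisions are made locally, without a network round trip

Bandwidth Efficiency: only results or selected events are transmitted, not raw sensor streams

Privacy Preservation: sensitive data can stay on the device

Scalability: each new device brings its own compute instead of adding load to a central server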

Real-World Applications

1. Autonomous Systems

Real-time navigation in self-driving cars and drones

Onboard vision models for obstacle avoidance

2. Smart Manufacturing

Predictive maintenance via vibration sensors

Real-time defect detection on production lines
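Predictive maintenance from vibration data often starts with simple features computed on the device itself. The following is a minimal sketch that compares the root-mean-square (RMS) amplitude of a window of accelerometer samples with a baseline recorded during healthy operation. The 1.5× threshold factor and all sample values are hypothetical.

```python
import math

def rms(window):
    """Root-mean-square amplitude of one window of vibration samples."""
    return math.sqrt(sum(x * x for x in window) / len(window))

def is_anomalous(window, baseline_rms, factor=1.5):
    """Flag a window whose RMS exceeds the healthy baseline by `factor` (hypothetical threshold)."""
    return rms(window) > factor * baseline_rms

# Hypothetical baseline measured while the machine was known to be healthy.
healthy = [0.1, -0.12, 0.09, -0.11, 0.1, -0.08]
baseline = rms(healthy)

normal_window = [0.11, -0.1, 0.1, -0.12, 0.09, -0.1]
worn_bearing_window = [0.3, -0.28, 0.35, -0.31, 0.29, -0.33]  # hypothetical fault signature

print(is_anomalous(normal_window, baseline))        # False
print(is_anomalous(worn_bearing_window, baseline))  # True
```

Real systems usually add frequency-domain features, but the pattern is the same: a cheap statistic is computed locally, and only flagged windows need to leave the device.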

3. Healthcare and Wearables

On-device anomaly detection in wearables

Portable diagnostic tools for remote areas

4. Environmental Monitoring

Smart camera traps for species recognition

Edge-enabled irrigation and pest detection in agriculture

5. Retail and Smart Spaces

Footfall analysis and theft detection via smart cameras

Personalized customer interaction at kiosks

Technical Challenges

1. Model Optimization

Quantization (INT8), pruning, neural architecture search (NAS), knowledge distillation
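Of these techniques, pruning is the simplest to illustrate. The following is a minimal sketch of unstructured magnitude pruning, which zeroes the smallest-magnitude weights until a target sparsity is reached. The function name and the 50% target are illustrative. Framework tooling, such as the TensorFlow Model Optimization Toolkit, typically prunes gradually during fine-tuning rather than in one shot.

```python
def magnitude_prune(weights, sparsity):
    """Return a copy of `weights` with the smallest-magnitude fraction `sparsity` set to zero."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned

w = [0.8, -0.05, 0.3, 0.01, -0.6, 0.2]
print(magnitude_prune(w, 0.5))  # [0.8, 0.0, 0.3, 0.0, -0.6, 0.0]
```

Zeroed weights only save memory or time if the runtime stores them sparsely or skips them. This dependency is one reason pruning is often paired with quantization and hardware-specific tuning.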

2. Hardware Heterogeneity

Cross-platform deployment

Hardware-specific tuning

3. Privacy and Security

Secure boot, encrypted inference

Federated learning for decentralized training

4. Real-Time Constraints

Latency budgets, power-aware scheduling

Thermal management
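Latency budgets and power-aware scheduling often reduce to simple arithmetic. The following is a minimal sketch for a duty-cycled device that sleeps between inferences. Given a hypothetical energy cost per inference, a sleep power, and an average power budget, it computes the highest inference rate the budget allows. It then checks that each inference finishes within both its latency budget and the interval before the next inference is due. All figures are illustrative and are not measurements of any particular chip.

```python
def max_inference_rate(budget_mw, sleep_mw, energy_per_inference_mj):
    """Highest inferences per second that keeps average power within budget.

    Approximation: average power ~= sleep power + rate * energy per inference
    (mJ per inference * inferences/s = mW), ignoring sleep power while active.
    """
    headroom_mw = budget_mw - sleep_mw
    if headroom_mw <= 0:
        return 0.0
    return headroom_mw / energy_per_inference_mj

def fits_latency_budget(latency_ms, budget_ms, rate_hz):
    """An inference must finish within its latency budget and before the next one is due."""
    if rate_hz <= 0:
        return False  # no inference can be scheduled at this power budget
    period_ms = 1000.0 / rate_hz
    return latency_ms <= min(budget_ms, period_ms)

# Hypothetical figures for a small model on a microcontroller.
rate = max_inference_rate(budget_mw=1.0, sleep_mw=0.25, energy_per_inference_mj=0.25)
print(rate)                                      # 3.0
print(fits_latency_budget(120, 200, rate))       # True
```

Thermal throttling fits into the same model. If the chip slows down when hot, the measured latency rises, and the scheduler can react by lowering the rate or switching to a lighter model.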

5. Deployment and Maintenance

Over-the-air (OTA) updates, model versioning

Lightweight telemetry and monitoring
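For OTA updates, a device should reject a model artifact that fails an integrity check or that would roll back to an older version. The following is a minimal sketch that uses SHA-256 from Python's standard library. The manifest fields (`version`, `sha256`) are hypothetical. A production system would also verify a cryptographic signature over the manifest rather than trusting the hash alone.

```python
import hashlib

def parse_version(v):
    """Turn a dotted version string like '1.4.2' into a comparable tuple (1, 4, 2)."""
    return tuple(int(part) for part in v.split("."))

def should_install(model_bytes, manifest, installed_version):
    """Accept an OTA model update only if its hash matches and it is strictly newer.

    `manifest` is a hypothetical dict with 'version' and 'sha256' keys.
    """
    digest = hashlib.sha256(model_bytes).hexdigest()
    if digest != manifest["sha256"]:
        return False  # corrupted or tampered artifact
    return parse_version(manifest["version"]) > parse_version(installed_version)

blob = b"\x00fake-model-weights"  # stand-in for a downloaded model file
manifest = {"version": "1.5.0", "sha256": hashlib.sha256(blob).hexdigest()}
print(should_install(blob, manifest, installed_version="1.4.2"))         # True
print(should_install(blob + b"x", manifest, installed_version="1.4.2"))  # False
```

Versions are compared as integer tuples rather than strings, so "1.10.0" correctly sorts after "1.5.0".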

Emerging Trends

1. Federated Learning

Collaborative training without data centralization

Challenges: non-IID data, secure aggregation
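The core aggregation step of federated averaging (FedAvg) weights each client's locally trained parameters by that client's number of training examples. The following is a minimal sketch with parameters as flat lists and hypothetical client data sizes. It deliberately omits secure aggregation and any mitigation for non-IID data.

```python
def fed_avg(client_weights, client_sizes):
    """Weighted average of client parameter vectors (the FedAvg aggregation step)."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    averaged = [0.0] * n_params
    for weights, size in zip(client_weights, client_sizes):
        for i, w in enumerate(weights):
            # Clients with more local data pull the global model further toward their update.
            averaged[i] += w * size / total
    return averaged

# Three hypothetical devices that trained locally on different amounts of data.
clients = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
sizes = [10, 30, 60]
print(fed_avg(clients, sizes))
```

Only these parameter vectors travel to the server, never the raw training data. That is the property that makes the approach attractive on edge devices.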

2. 5G and IoT Integration

Low-latency coordination between edge nodes

Multi-access edge computing (MEC) for hybrid edge-cloud workloads

3. TinyML

Machine learning on microcontrollers, typically at power budgets under 1 mW

Use cases: keyword spotting, gesture recognition
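On microcontrollers, TinyML runtimes generally avoid floating point. INT8 inputs and weights are multiplied, the products are summed in a 32-bit integer accumulator, and the result is rescaled back to INT8. The following is a minimal sketch of one integer-only neuron. It assumes symmetric quantization (zero-points of 0) and uses a floating-point rescale factor for readability. Real kernels typically replace that factor with a fixed-point multiplier and shift. All values are hypothetical.

```python
def clamp_int8(x):
    return max(-128, min(127, x))

def int8_neuron(x_q, w_q, bias_q, x_scale, w_scale, out_scale):
    """One fully connected output computed with integer arithmetic.

    x_q, w_q: INT8 values (symmetric quantization, zero-point 0 assumed)
    bias_q:   INT32 bias already expressed in units of x_scale * w_scale
    """
    acc = bias_q
    for xi, wi in zip(x_q, w_q):
        acc += xi * wi  # int8 * int8 products summed in a wide (int32-style) accumulator
    # Rescale from the accumulator's scale (x_scale * w_scale) to the output scale.
    multiplier = (x_scale * w_scale) / out_scale
    return clamp_int8(round(acc * multiplier))

x_q = [50, -20, 100]
w_q = [60, 30, -10]
y_q = int8_neuron(x_q, w_q, bias_q=500, x_scale=0.02, w_scale=0.01, out_scale=0.05)
print(y_q)
```

The integer result `y_q * out_scale` approximates the float computation to within half an output step. This is how keyword spotting or gesture models can run on cores without a floating-point unit.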

4. Privacy-Preserving AI

Differential privacy, homomorphic encryption

Secure enclaves for inference
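Differential privacy is commonly applied by adding calibrated noise before data leaves the device. The following is a minimal sketch of the Laplace mechanism for a count query, which adds noise with scale sensitivity/epsilon. The epsilon value and the wearable event count are hypothetical. Python's `random` module is not a cryptographically secure source, so a real deployment would need a vetted DP library.

```python
import random

def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two i.i.d. exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(true_count, epsilon, sensitivity=1.0, rng=random):
    """Laplace mechanism: add noise with scale sensitivity / epsilon to a count."""
    return true_count + laplace_noise(sensitivity / epsilon, rng)

rng = random.Random(42)  # seeded only so the demo is reproducible
# Hypothetical: number of irregular-heartbeat events a wearable observed today.
noisy = private_count(true_count=7, epsilon=0.5, rng=rng)
print(round(noisy, 2))
```

A smaller epsilon gives stronger privacy but noisier reports. With epsilon = 0.5 and sensitivity 1, the noise has scale 2, so individual reports are imprecise while averages over many devices remain useful.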

5. Edge-Cloud Synergy

Dynamic partitioning of workloads

Adaptive performance tuning
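Dynamic partitioning can be framed as choosing the layer at which to split a model between device and cloud. The following is a minimal sketch that assumes per-layer latency estimates for each side, the size of the tensor sent at each candidate split, and a measured uplink bandwidth. Every number is hypothetical, and the sketch ignores the cost of returning results.

```python
def best_split(device_ms, cloud_ms, tensor_kb, uplink_kbps):
    """Pick split index k: layers [0, k) run on-device, layers [k, n) in the cloud.

    device_ms[i], cloud_ms[i]: estimated latency of layer i on each side
    tensor_kb[k]: size of the tensor sent when splitting at k
                  (tensor_kb[0] is the raw input; tensor_kb[i] is the output of layer i-1)
    Returns (k, total_ms). k == n means fully on-device, so nothing is sent.
    """
    n = len(device_ms)
    best = None
    for k in range(n + 1):
        # KB * 8 = kilobits; kilobits / kbps = seconds; * 1000 = milliseconds.
        transfer_ms = 0.0 if k == n else tensor_kb[k] * 8 * 1000 / uplink_kbps
        total = sum(device_ms[:k]) + transfer_ms + sum(cloud_ms[k:])
        if best is None or total < best[1]:
            best = (k, total)
    return best

# Hypothetical 3-layer model on a 1 Mbps uplink.
print(best_split(device_ms=[5, 20, 80], cloud_ms=[1, 2, 4],
                 tensor_kb=[150, 20, 5], uplink_kbps=1000))  # (2, 69.0)
```

Re-running the search when the measured bandwidth changes makes the partition adaptive. On a slow link the split moves toward the device, and on a fast link it moves toward the cloud.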

6. AutoML for Edge

Hardware-aware model generation

Tools: Edge TPU Compiler, NNI, Meta NAS
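Hardware-aware model generation optimizes for accuracy under device constraints. The following is a minimal sketch of only the final selection step: filter candidates by latency and memory measured on the target device, then keep the most accurate survivor. The candidate names, metrics, and limits are hypothetical, and real NAS tools fold such constraints into the search itself.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str
    accuracy: float    # validation accuracy, 0-1
    latency_ms: float  # measured on the target device
    ram_kb: int        # peak activation memory

def select_model(candidates, max_latency_ms, max_ram_kb):
    """Return the most accurate candidate that fits the device budget, or None."""
    feasible = [c for c in candidates
                if c.latency_ms <= max_latency_ms and c.ram_kb <= max_ram_kb]
    return max(feasible, key=lambda c: c.accuracy, default=None)

candidates = [
    Candidate("wide", 0.92, 180.0, 900),
    Candidate("medium", 0.89, 70.0, 310),
    Candidate("tiny", 0.83, 25.0, 120),
]
chosen = select_model(candidates, max_latency_ms=100.0, max_ram_kb=320)
print(chosen.name)  # medium
```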

Ethical and Societal Implications

1. Accountability

Who is responsible for autonomous decisions?

2. Data Ownership

Consent and transparency in edge environments

3. Bias and Fairness

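Contextual bias: models trained on data from one setting may underperform in the environment where they are deployed

Lack of feedback loops: devices operating offline may never report the errors that would expose such bias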

4. Security Risks

Physical tampering, adversarial attacks

Need for runtime integrity

5. Environmental Impact

E-waste and energy consumption

Sustainable design practices

6. Digital Divide

Accessibility in underserved regions

Inclusive deployment strategies

Case Study: Wildlife Monitoring with Edge AI

Overview

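Smart camera traps running embedded convolutional neural networks (CNNs) are changing how biodiversity is tracked. Animals are recognized on the device, so only relevant captures need to be stored or transmitted.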

Technical Highlights

Quantized models on ARM Cortex-M

Event filtering and real-time alerts

Long battery life and offline operation
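Event filtering is what lets a camera trap stay in the field for long periods. Frames are classified on the device, and only confident, sustained detections trigger an alert. The following is a minimal sketch that assumes a classifier returning a (label, confidence) pair per frame. The 0.8 threshold, the three-frame persistence rule, the "empty" background label, and the species labels are all hypothetical.

```python
def filter_events(detections, threshold=0.8, min_consecutive=3, ignore=("empty",)):
    """Yield a label once when it stays above `threshold` for `min_consecutive`
    frames in a row. `detections` is an iterable of (label, confidence) pairs.
    """
    current, run, alerted = None, 0, False
    for label, conf in detections:
        if conf < threshold or label in ignore:
            current, run, alerted = None, 0, False  # nothing worth tracking in this frame
            continue
        if label == current:
            run += 1
        else:
            current, run, alerted = label, 1, False  # a new candidate sighting begins
        if run >= min_consecutive and not alerted:
            alerted = True  # alert once per continuous sighting
            yield current

frames = [("empty", 0.95), ("leopard", 0.85), ("leopard", 0.91), ("leopard", 0.88),
          ("leopard", 0.9), ("leopard", 0.4), ("deer", 0.82)]
print(list(filter_events(frames)))  # ['leopard']
```

Requiring several consecutive confident frames suppresses one-off false positives from moving foliage or lighting changes. Waking the radio only on a real alert is what keeps the battery budget low.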

Impact

Faster insights for conservationists

Scalable, low-cost deployment

Privacy-preserving data collection

Conclusion

Edge AI is no longer a niche optimization—it’s a foundational shift in how intelligent systems are built and deployed. By enabling real-time, privacy-aware, and decentralized decision-making, it’s unlocking new possibilities across industries.

As trends like federated learning, TinyML, and 5G integration accelerate, Edge AI will become central to the next generation of intelligent infrastructure. The edge is no longer the frontier—it’s the new core.